Insights & News

The latest insights and perspectives from our team

AI Detectors in Education: Protect Academic Integrity in the Age of ChatGPT & Claude

Discover why AI detectors are becoming essential for education. Learn how institutions can detect AI-generated content, ensure fair assessment, and protect academic integrity in the era of ChatGPT and Claude.

AI Moderation & Content Safety: Detecting Emerging Threats

Discover how AI moderation adapts to deepfakes, synthetic media, and evolving online threats using multimodal analysis and real-time detection.

From Toxicity to Deepfakes: Multi-Modal AI Detection Explained

Learn how multi-modal AI detects toxicity, deepfakes, synthetic audio, and manipulated media across text, images, video, and audio to secure digital platforms.

AI Detection of Edited Media: Challenges & Solutions

Learn the top challenges in detecting manipulated and edited media—and how AI systems identify deepfakes, tampered images, and synthetic content at scale.

AI Video Moderation Explained: Frame-by-Frame Analysis

Learn how AI analyzes video frame by frame to detect harmful, illegal, and policy-violating content in real time. Discover how Detector24 enables scalable video moderation.

The Risks of AI-Generated Content on Social Media

AI-generated images, videos, audio, and text are reshaping social media. Explore the risks, real-world impacts, and why detection now matters.

AI Detection for Educators & Publishers

Learn how educators and publishers can use AI detection responsibly by combining policy, human review, and Detector24 signals to protect authenticity and trust.

Face Redaction of Minors in Images API

Publishing images that contain unredacted faces of minors on social media or digital platforms carries significant legal, financial, and reputational risk. Global regulations such as COPPA (US), GDPR (EU), and the UK Age Appropriate Design Code treat a child’s face as personal—and often biometric—data, requiring strict safeguards and, in many cases, verified parental consent. Non-compliance can result in multi-million-dollar fines, regulatory enforcement, civil liability, and long-term brand damage.

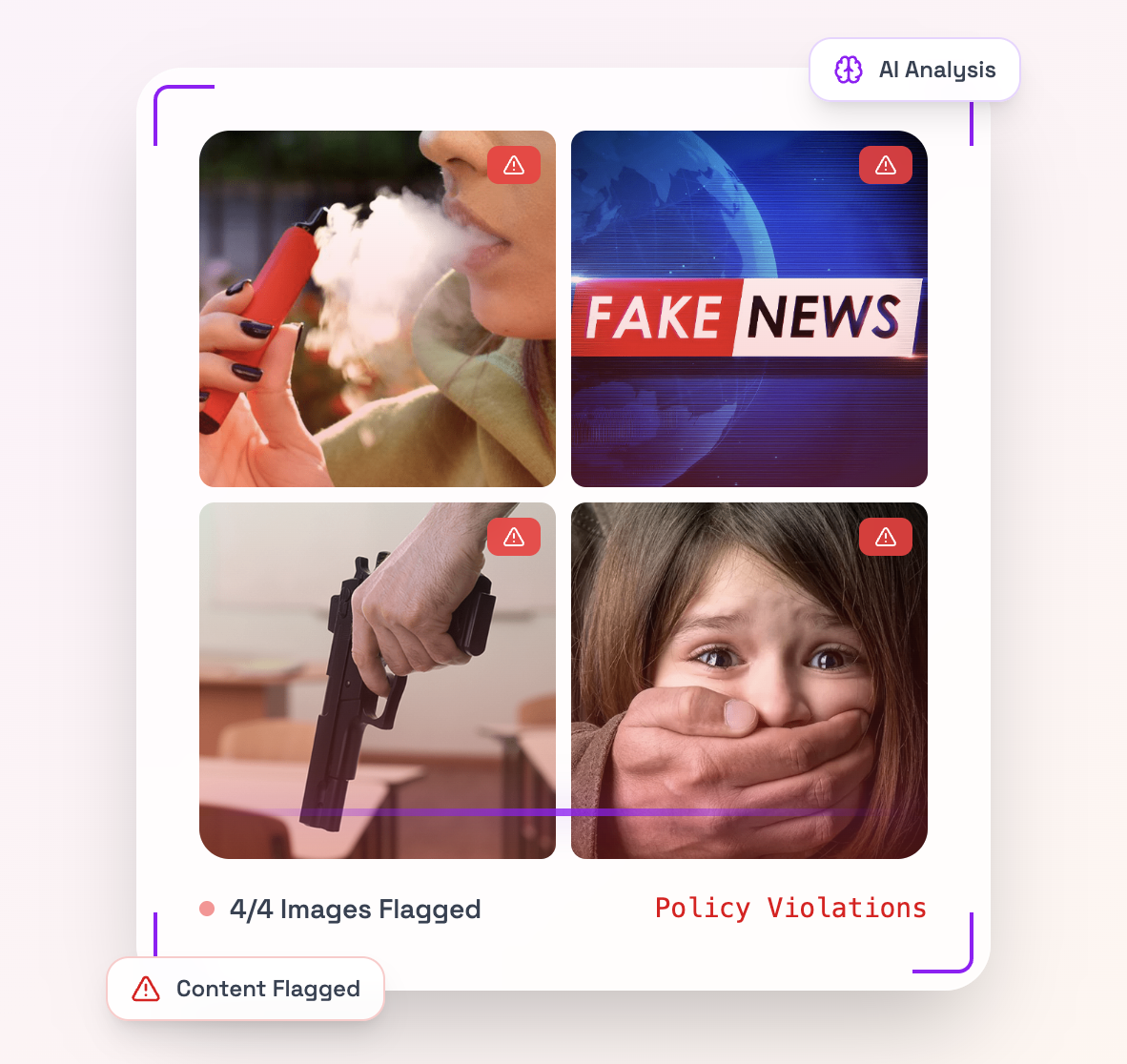

AI Content Moderation with Detector24: A Comprehensive Guide to Moderation Models

Content moderation is essential for maintaining safe and positive online communities. Users upload an enormous volume of content: more than 500 hours of video are added to YouTube each minute, for example.

AI Content Moderation: Technical Guide to Detector24’s Text Moderation Models

Build safer communities with AI Content Moderation. Learn how Detector24’s text moderation models detect scams, PII leaks, misinformation, AI‑generated text, mental‑health crisis signals, and sentiment—plus practical workflow tips.

Python C2PA Tutorial: Verifying Image Authenticity and Detecting Tampering

In today's digital landscape, AI-generated and manipulated images are becoming increasingly sophisticated and widespread. This raises critical questions about authenticity and trust: How can we verify that an image is genuine and detect if it has been tampered with?