AI Edited Image Forgery Detection

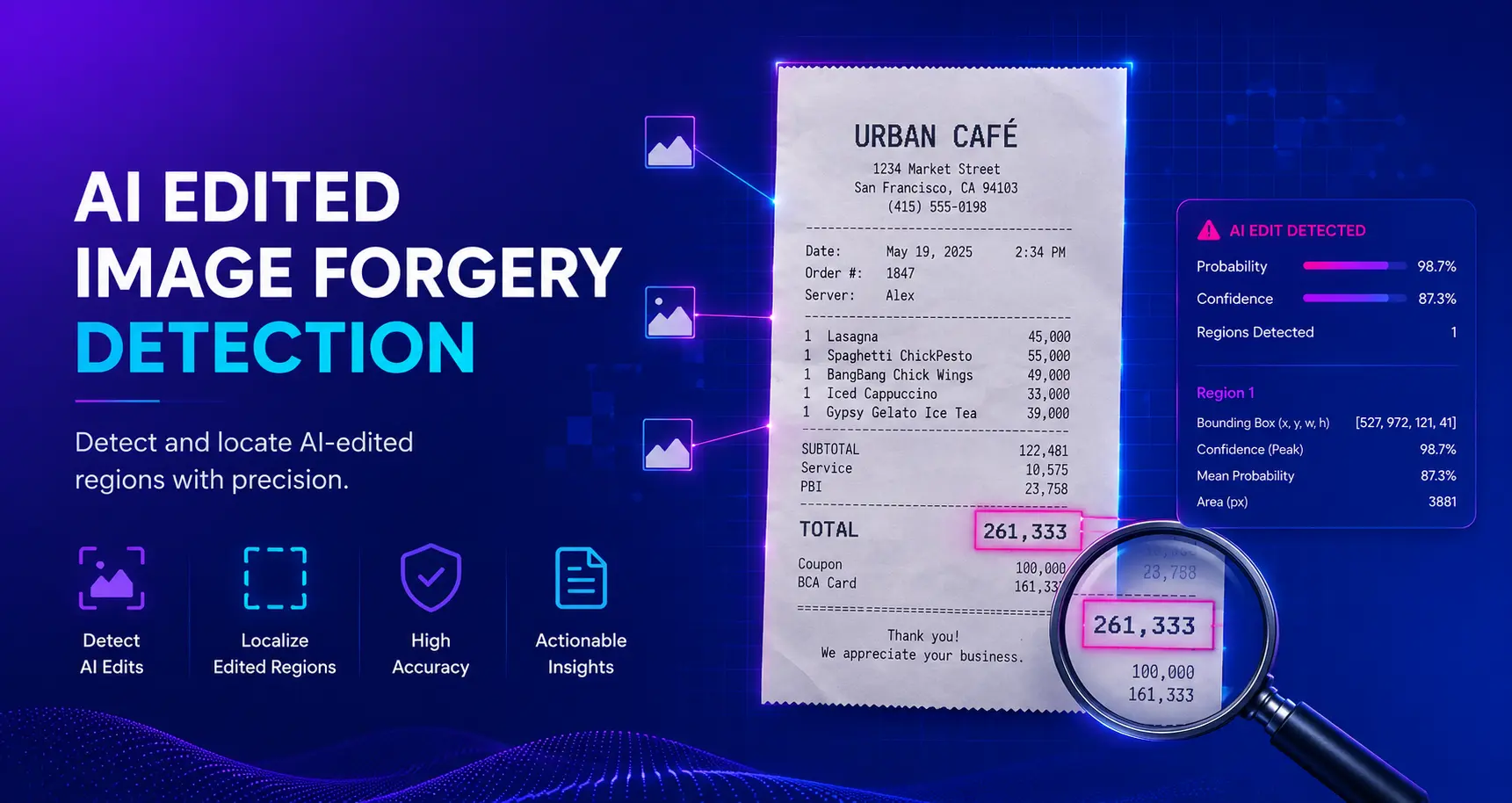

Locate AI-edited regions in images with precise bounding boxes. Catches surgical edits — altered receipt totals, faked insurance damage, swapped ID fields — that whole-image AI detectors miss. Detects edits from Nano-Banana, Flux Kontext, GPT-4o, Qwen-Image-Edit, Bagel, Step1X-Edit, TextFlux and more.

Bynn AI Edited Image Forgery Detection

The Bynn AI Edited Image Forgery Detection model identifies which specific regions of an image have been altered by modern AI image editors. Unlike whole-image AI detectors that only tell you whether an image looks synthetic, this model draws a precise bounding box around every region that has been repainted — so investigators can see exactly what changed: which dollar amount on a receipt, which scratch on a car, which line item on an invoice, which field on a driver's license.

The Challenge

Image manipulation used to require Photoshop skills, time, and a careful eye. In 2026 it requires a sentence. A user opens Google Nano-Banana, Flux Kontext, GPT-4o Image, Qwen-Image-Edit, Bagel, Step1X-Edit, or TextFlux, uploads a real photograph, types "change the total to $4,800" or "add a dent to the front bumper", and seconds later receives a new image where only that one region has been seamlessly repainted. The lighting matches. The shadows match. The texture matches. Pixels outside the edit look untouched. To the human eye, the result is indistinguishable from an authentic photograph.

This has rewired the economics of fraud. Insurance carriers report a sharp rise in suspicious claim photos: hailstorm damage added to a roof that was inspected clean weeks earlier, fender dents appearing only in the submitted photo, water damage extending exactly to where a covered policy boundary ends. Each edit takes 30 seconds and a $20 subscription. Each successful claim pays thousands. The math overwhelmingly favors the fraudster.

Expense and accounts payable fraud has followed the same curve. A consultant inflates an $80 client dinner to $480 by adding one digit. A contractor submits a vendor invoice where the line item descriptions are real but the totals have been raised by 15%. A traveler submits a hotel receipt where the dates have been shifted to fall inside a covered trip window. The receipts look authentic because they are authentic — only one number changed. Manual review cannot keep up; the edits are surgical, the originals are unavailable for comparison, and finance teams approve thousands of receipts a month.

Identity fraud has the highest stakes. AI editors can change a date of birth on a passport, swap a photo on a driver's license, alter the address on a utility bill, or modify the issue date on an ID — without disturbing any of the security features, fonts, or background graphics that normally trip up forgers. KYC reviewers see a document that passes every visual check; only the one piece of information that mattered to the fraudster has been changed.

Editorial integrity is at risk too. A news photo where one person has been edited out of a sensitive scene. A product photo on a marketplace where a defect has been removed. A dating profile where blemishes, tattoos, or accessories have been quietly altered. A court exhibit where a key piece of evidence has been added or erased. Conventional AI-image detectors, trained to recognize fully synthetic images, look at these photos and report "authentic" — because 99% of the pixels really are. The edit hides in the 1%.

Detecting these surgical edits requires a different kind of model: one that does not just classify the whole image, but actively localizes which pixels were repainted. That is what Bynn AI Edited Image Forgery Detection does.

How It Works

- Triple-stream encoding: Three parallel deep encoders process the image at once — a Main RGB branch, a Frozen RGB reference branch, and a Noise branch operating on a high-pass-filtered view that exposes editing residuals invisible in RGB.

- Contrastive feature alignment: An asymmetric contrastive loss pulls authentic pixels together in feature space while pushing edited pixels apart, even when the visible difference between them is microscopic.

- Pixel-level segmentation: The fused features feed a 4-conv head that produces a per-pixel forgery probability map. A 0.40 threshold (calibrated for best F1) binarizes the map.

- Region extraction: Connected components in the binary map are emitted as bounding boxes with per-region peak and mean confidence — so reviewers can navigate from "this image was edited" to "this specific 121×41-pixel area on the receipt was repainted".

- Editor-agnostic generalization: Trained across Nano-Banana, Bagel, Kontext, GPT-4o, Qwen-Image-Edit, TextFlux, Step1X-Edit, Gemini, GPT-Image-2 and Ideogram-v2-Edit, the model has learned what an edit is, not what any one editor looks like.
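The thresholding and region-extraction steps above can be sketched in plain Python. This is an illustration under stated assumptions, not the model's actual post-processing: the function name `extract_regions`, the use of 4-connectivity, and the `>=` comparison at the 0.40 threshold are all assumptions, and the probability map is a toy list of lists rather than real model output.

```python
from collections import deque

THRESHOLD = 0.40  # operating point quoted in the text


def extract_regions(prob_map, threshold=THRESHOLD):
    """Group above-threshold pixels into connected regions with bounding boxes.

    prob_map is a list of rows of per-pixel forgery probabilities.
    4-connectivity is an assumption; the text does not specify it.
    """
    height = len(prob_map)
    width = len(prob_map[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    regions = []
    for y0 in range(height):
        for x0 in range(width):
            if seen[y0][x0] or prob_map[y0][x0] < threshold:
                continue
            # Breadth-first flood fill over one connected component.
            queue = deque([(x0, y0)])
            seen[y0][x0] = True
            xs, ys, probs = [], [], []
            while queue:
                x, y = queue.popleft()
                xs.append(x)
                ys.append(y)
                probs.append(prob_map[y][x])
                for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
                    inside = 0 <= nx < width and 0 <= ny < height
                    if inside and not seen[ny][nx] and prob_map[ny][nx] >= threshold:
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            left, top = min(xs), min(ys)
            box_w = max(xs) - left + 1
            box_h = max(ys) - top + 1
            regions.append({
                "x": left, "y": top, "width": box_w, "height": box_h,
                "bbox": [left, top, box_w, box_h],
                "confidence": max(probs),                    # peak probability
                "mean_probability": sum(probs) / len(probs),  # mean over the component
                "area": len(probs),                           # above-threshold pixel count
            })
    return regions


# Toy 3x4 map with one two-pixel edited area.
demo_map = [
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.9, 0.5, 0.1],
    [0.1, 0.1, 0.1, 0.1],
]
demo_regions = extract_regions(demo_map)
```

Because `area` counts only above-threshold pixels, it can be smaller than `width * height`, which matches the example response where a 121×41 box reports an area of 3881.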

Response Structure

- is_forged: Boolean — true if AI editing was detected anywhere in the image. False does NOT mean the image is authentic — only that this model found no evidence of AI-driven local editing.

- forgery_probability: Float (0.0-1.0) — global probability that the image contains AI edits

- confidence: Float (0.0-1.0) — model confidence in the classification

- label: String — "forged" or "no_forgery_detected". The negative label only means this model found no edits; it is not a certification of authenticity.

- num_regions: Integer — count of distinct edited regions detected

- regions: Array of bounding boxes, one per detected edit. Each region contains:

  - x, y: Top-left pixel coordinates of the bounding box

  - width, height: Bounding box dimensions in pixels

  - bbox: Convenience array [x, y, width, height]

  - confidence: Peak per-pixel forgery probability inside the region (0.0-1.0)

  - mean_probability: Average per-pixel forgery probability inside the region

  - area: Number of forged pixels inside the region
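As a rough illustration of consuming these fields, the sketch below turns the `regions` array into typed objects and converts each box to corner coordinates for cropping. The names `Region`, `parse_regions`, and `corners` are hypothetical helpers, not part of any Bynn SDK; only the field names come from the list above, and the sample values mirror the documented example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Region:
    x: int
    y: int
    width: int
    height: int
    confidence: float        # peak per-pixel probability
    mean_probability: float  # average per-pixel probability
    area: int                # forged pixel count

    def corners(self) -> Tuple[int, int, int, int]:
        """Return (left, top, right, bottom) with right/bottom exclusive."""
        return (self.x, self.y, self.x + self.width, self.y + self.height)


def parse_regions(result: dict) -> List[Region]:
    """Build Region objects from the documented fields of one result."""
    parsed = []
    for raw in result.get("regions", []):
        parsed.append(Region(
            x=raw["x"], y=raw["y"],
            width=raw["width"], height=raw["height"],
            confidence=raw["confidence"],
            mean_probability=raw["mean_probability"],
            area=raw["area"],
        ))
    return parsed


def summarize(result: dict) -> str:
    """One-line summary that keeps the negative-label caveat visible."""
    if not result["is_forged"]:
        return "no AI edits detected (not a certification of authenticity)"
    return "%d edited region(s), p=%.3f" % (
        result["num_regions"], result["forgery_probability"])


sample = {
    "is_forged": True,
    "forgery_probability": 0.997,
    "confidence": 0.886,
    "label": "forged",
    "num_regions": 1,
    "regions": [{
        "x": 527, "y": 972, "width": 121, "height": 41,
        "bbox": [527, 972, 121, 41],
        "confidence": 0.997, "mean_probability": 0.886, "area": 3881,
    }],
}
regions = parse_regions(sample)
```

The `(left, top, right, bottom)` tuple is the box convention many imaging libraries expect for cropping, which is why the helper converts away from `[x, y, width, height]`.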

Example response:

    {
      "is_forged": true,
      "forgery_probability": 0.997,
      "confidence": 0.886,
      "label": "forged",
      "num_regions": 1,
      "regions": [
        {
          "x": 527, "y": 972, "width": 121, "height": 41,
          "bbox": [527, 972, 121, 41],
          "confidence": 0.997,
          "mean_probability": 0.886,
          "area": 3881
        }
      ]
    }

Performance Metrics

Evaluated on a held-out test split spanning nine editor families with threshold-calibrated inference (best F1 operating point):

| Metric | Score |
|---|---|
| F1 | 0.7447 |
| IoU | 0.5932 |
| Precision | 0.7429 |
| Recall | 0.7464 |

Per-editor F1 and IoU:

| Editor | F1 | IoU |
|---|---|---|
| Qwen-Image-Edit (Non-Asian portraits) | 0.8672 | 0.7656 |
| Qwen-Image-Edit (Asian portraits) | 0.8671 | 0.7654 |
| Gemini + Ideogram + GPT-Image | 0.8116 | 0.6829 |
| Flux Kontext | 0.7484 | 0.5980 |
| Nano-Banana | 0.6031 | 0.4318 |
| TextFlux | 0.5646 | 0.3934 |
| Bagel | 0.5517 | 0.3810 |
| GPT-4o | 0.4374 | 0.2800 |
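For readers comparing the two columns: when F1 and IoU are computed from the same pixel counts, they are tied by the identity IoU = F1 / (2 - F1), and F1 is the harmonic mean of precision and recall. The short check below applies both formulas to the reported figures. It is only a consistency check on the published numbers; how the metrics were actually aggregated is not stated in this document, and the function names are illustrative.

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2.0 * precision * recall / (precision + recall)


def iou_from_f1(f1: float) -> float:
    """IoU implied by an F1 (Dice) score computed on the same counts."""
    return f1 / (2.0 - f1)


# (F1, IoU) pairs copied from the tables above.
reported = {
    "Overall": (0.7447, 0.5932),
    "Qwen-Image-Edit (Non-Asian portraits)": (0.8672, 0.7656),
    "Qwen-Image-Edit (Asian portraits)": (0.8671, 0.7654),
    "Gemini + Ideogram + GPT-Image": (0.8116, 0.6829),
    "Flux Kontext": (0.7484, 0.5980),
    "Nano-Banana": (0.6031, 0.4318),
    "TextFlux": (0.5646, 0.3934),
    "Bagel": (0.5517, 0.3810),
    "GPT-4o": (0.4374, 0.2800),
}

# Largest gap between the reported IoU and the IoU implied by the reported F1.
max_gap = max(abs(iou_from_f1(f1) - iou) for f1, iou in reported.values())
overall_f1 = f1_from_precision_recall(0.7429, 0.7464)
```

The reported values agree with both identities to about three decimal places, so either column can be used to rank editors; the ordering is the same.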

Detected Edit Types

Object & Content Edits

- Object addition (inserting new items into a scene)

- Object removal (content-aware fill, inpainting)

- Object replacement and attribute swap

- Background replacement and scene restyling

Text & Numeric Edits

- Replacing numbers (totals, dates, amounts) on receipts, invoices, IDs

- Substituting names, addresses, or codes

- Removing or adding watermarks, stamps, signatures

Face & Body Edits

- Facial attribute changes (age, expression, accessories)

- Adding or removing skin marks, bruises, tattoos

- Local face swaps not involving the whole face

Use Cases

- Insurance claim photos: Surface added damage on vehicle, property, or medical claim photos. Localize the exact dent, crack, scorch mark, or bruise that was AI-edited into the scene — and the exact pre-existing damage that was AI-removed to inflate a "new damage" claim.

- Receipt and expense fraud: Flag the precise digit, line item, date, or merchant name that was altered on a reimbursement receipt before AP approves payment. Localized evidence — not just a probability — makes finance review fast and defensible.

- KYC and identity documents: Detect altered date-of-birth, photo, name, address, expiry date, or issuing-authority text on IDs, passports, driver's licenses, and proof-of-address documents. See exactly which field has been repainted — not just that "something looks off".

- Accounts payable and vendor invoices: Verify that line-item amounts, quantities, and PO numbers on supplier invoices have not been edited between issue and submission. Particularly valuable for contractor and reimbursable-cost workflows.

- Marketplace and listings moderation: Detect when sellers have edited out product defects, removed competitor watermarks, or restyled product photos to misrepresent condition.

- Newsroom and content provenance: Verify whether photojournalism submissions have been locally altered — people removed from sensitive scenes, license plates added, signs reworded — before publication.

- Dating and trust platforms: Identify edited profile photos where blemishes, accessories, body proportions, or co-subjects have been altered, undermining identity verification.

- Legal and forensic review: Provide explainable, region-level evidence for court exhibits — the model shows where the edit is, not just that there is one.

- Layered with AI-generated detection: Pair with the Bynn AI-Generated Image Detection model — that one catches fully synthetic images; this one catches the surgical edits to real photographs that whole-image classifiers miss.

Known Limitations

- Heavy compression: Aggressive JPEG re-saving (Q<60) destroys the high-frequency residuals the model relies on.

- Style transfer: Whole-image style changes leave a uniform "edit" signal across every pixel, blurring the localization signal — those images are better handled by AI-Generated Image Detection.

- GPT-4o edits: Performance is weakest on GPT-4o image edits (IoU 0.28). Pair with additional fraud signals for these.

- Editor coverage: Trained across nine editor families through January 2026. Brand-new editors may produce different residuals — the model still generalizes well, but accuracy on a brand-new editor cannot be guaranteed until evaluated.

- Whole-image generation: This model localizes edits to real photographs, not entirely synthetic images. Use the Bynn AI-Generated Image Detection model for that.

- Screenshot capture: Documents captured via screenshot have lost the original compression signature, which reduces localization accuracy.

Disclaimers

- Probability scores, not legal proof: Detections are evidence to be reviewed, not a final verdict.

- False positives possible: Heavy filtering, unusual textures, or lossy capture can occasionally produce spurious regions; provide an appeal path.

- Combine signals: Pair with AI-Generated Image Detection, Document Tampering Detection, OCR-based field cross-checks, and metadata analysis for the most robust fraud workflows.

- Human review: High-stakes decisions (insurance approvals, fraud prosecution, KYC denials) should always include human verification of the flagged regions.

Best practice: Run AI-Generated Image Detection first to catch fully synthetic images. For images that pass the synthetic check, run this model to surface the surgical edits that whole-image classifiers cannot see. Together they cover both ends of the AI-fraud spectrum — pure synthesis and prompt-driven retouching.
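The two-stage order described above can be sketched as a small routing function. Everything here is hypothetical scaffolding: `is_synthetic` and `detect_edits` stand in for whatever client calls you use, the verdict strings are made up for illustration, and the response shape of the AI-Generated Image Detection model is not documented on this page, so it is reduced to a boolean.

```python
from typing import Callable


def triage(image_ref: str,
           is_synthetic: Callable[[str], bool],
           detect_edits: Callable[[str], dict]) -> dict:
    """Run the whole-image check first, then the edit localizer.

    is_synthetic: hypothetical wrapper around AI-Generated Image Detection.
    detect_edits: hypothetical wrapper returning this model's result dict.
    """
    if is_synthetic(image_ref):
        # Fully synthetic images are handled by the other model.
        return {"verdict": "synthetic", "regions": []}
    result = detect_edits(image_ref)
    if result["is_forged"]:
        # Pass the boxes on so a human reviewer can inspect them.
        return {"verdict": "edited", "regions": result["regions"]}
    # A negative result is absence of evidence, not proof of authenticity.
    return {"verdict": "no_ai_signal", "regions": []}
```

Injecting the two calls as parameters keeps the routing logic testable without network access and makes it easy to add further signals, such as OCR cross-checks, later.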

API Reference

Input Parameters

Pixel-level localization of AI-edited regions in images. Triple-stream ICL-Net trained on PromptForge-350k.

| Parameter | Type | Description | Example |
|---|---|---|---|
| image_url | string | URL of the image to analyze for AI-edited regions | https://example.com/image.jpg |
| base64_image | string | Base64-encoded image data | /9j/4AAQSkZJRgABAQAA... |

Response Fields

Forgery localization with binary verdict and region bounding boxes

| Field | Type | Description | Example |
|---|---|---|---|
| is_forged | boolean | True if AI editing was detected anywhere in the image. False means this model found no evidence of editing — NOT a guarantee that the image is authentic. | true |
| forgery_probability | float | Probability that the image contains AI edits (0.0-1.0) | 0.87 |
| confidence | float | Model confidence in the classification (0.0-1.0) | 0.91 |
| label | string | Classification label. "no_forgery_detected" only means this model found no evidence of editing — pair with AI-Generated Image Detection and Document Tampering Detection for a full picture. | forged |
| num_regions | integer | Number of distinct edited regions detected | 2 |
| regions | array | Bounding boxes for edited regions. Each region: x, y, width, height, bbox [x, y, w, h], confidence (peak per-pixel probability), mean_probability, area (forged pixel count). | See the example value below |

Example regions value:

    [
      {
        "x": 100,
        "y": 150,
        "width": 200,
        "height": 120,
        "bbox": [100, 150, 200, 120],
        "confidence": 0.99,
        "mean_probability": 0.88,
        "area": 3881
      }
    ]

Complete Example

Request

    {
      "model": "ai-edited-image-forgery",
      "image_url": "https://example.com/edited_photo.jpg"
    }

Response

    {
      "success": true,
      "data": {
        "is_forged": true,
        "forgery_probability": 0.87,
        "confidence": 0.91,
        "label": "forged",
        "num_regions": 2,
        "regions": [
          {
            "x": 100,
            "y": 150,
            "width": 200,
            "height": 120,
            "bbox": [100, 150, 200, 120],
            "confidence": 0.99,
            "mean_probability": 0.88,
            "area": 3881
          },
          {
            "x": 420,
            "y": 80,
            "width": 90,
            "height": 60,
            "bbox": [420, 80, 90, 60],
            "confidence": 0.95,
            "mean_probability": 0.81,
            "area": 1240
          }
        ]
      }
    }

Additional Information

If you send too many requests, the API returns a 429 HTTP error code along with an error message. Retry with exponential back-off: wait 4 seconds, then 8 seconds, then 16 seconds, and so on.
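The request format and the 429 back-off guidance can be combined in a short client-side sketch. It rests on several assumptions: the endpoint URL, authentication, and transport are not given on this page, so the actual HTTP call is left to a caller-supplied `send` function; `build_payload` and `call_with_backoff` are hypothetical helper names; and whether `model` must accompany `base64_image` is inferred from the example request rather than documented.

```python
import base64
import time
from typing import Callable, Optional, Tuple

MODEL_NAME = "ai-edited-image-forgery"  # value taken from the example request


def build_payload(image_url: Optional[str] = None,
                  image_bytes: Optional[bytes] = None) -> dict:
    """Build a request body from either a URL or raw image bytes."""
    if (image_url is None) == (image_bytes is None):
        raise ValueError("provide exactly one of image_url or image_bytes")
    payload = {"model": MODEL_NAME}
    if image_url is not None:
        payload["image_url"] = image_url
    else:
        payload["base64_image"] = base64.b64encode(image_bytes).decode("ascii")
    return payload


def call_with_backoff(send: Callable[[], Tuple[int, dict]],
                      first_delay: float = 4.0,
                      max_retries: int = 5,
                      sleep: Callable[[float], None] = time.sleep) -> dict:
    """Call send() and retry on HTTP 429, doubling the wait each time.

    send is a caller-supplied function returning (status_code, json_body).
    Waits follow the documented pattern: 4 s, 8 s, 16 s, and so on.
    """
    delay = first_delay
    for attempt in range(max_retries + 1):
        status, body = send()
        if status != 429:
            return body
        if attempt < max_retries:
            sleep(delay)
            delay *= 2
    raise RuntimeError("still rate limited after %d retries" % max_retries)


# Offline demonstration: a fake transport that is rate limited twice.
fake_responses = iter([(429, {}), (429, {}), (200, {"success": True})])
waits = []
demo_body = call_with_backoff(lambda: next(fake_responses), sleep=waits.append)
```

Passing `sleep` as a parameter lets the retry schedule be verified without actually waiting; in production the default `time.sleep` applies, and adding a cap or random jitter to the delay is a common refinement.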