Protecting Children's Privacy on Social Media

Introduction: In the age of social media and instant content sharing, photographs of children appear online every day. Failing to redact or blur minors’ faces in those images can lead to serious legal consequences and privacy violations. Businesses and developers are increasingly concerned with complying with child privacy rules such as COPPA, the GDPR, and the UK’s Age Appropriate Design Code. These rules impose strict requirements on handling children’s images, and non-compliance can result in fines in the millions or other legal action. For instance, in September 2022 Ireland’s Data Protection Commission fined Meta €405 million over Instagram’s handling of children’s personal data. Organizations need ways to avoid such outcomes, and this is where an API for automated face redaction of minors comes into play. By using AI to blur young faces in photos while leaving adults unmasked, platforms can protect children’s identities at scale and support compliance with global privacy regulations.

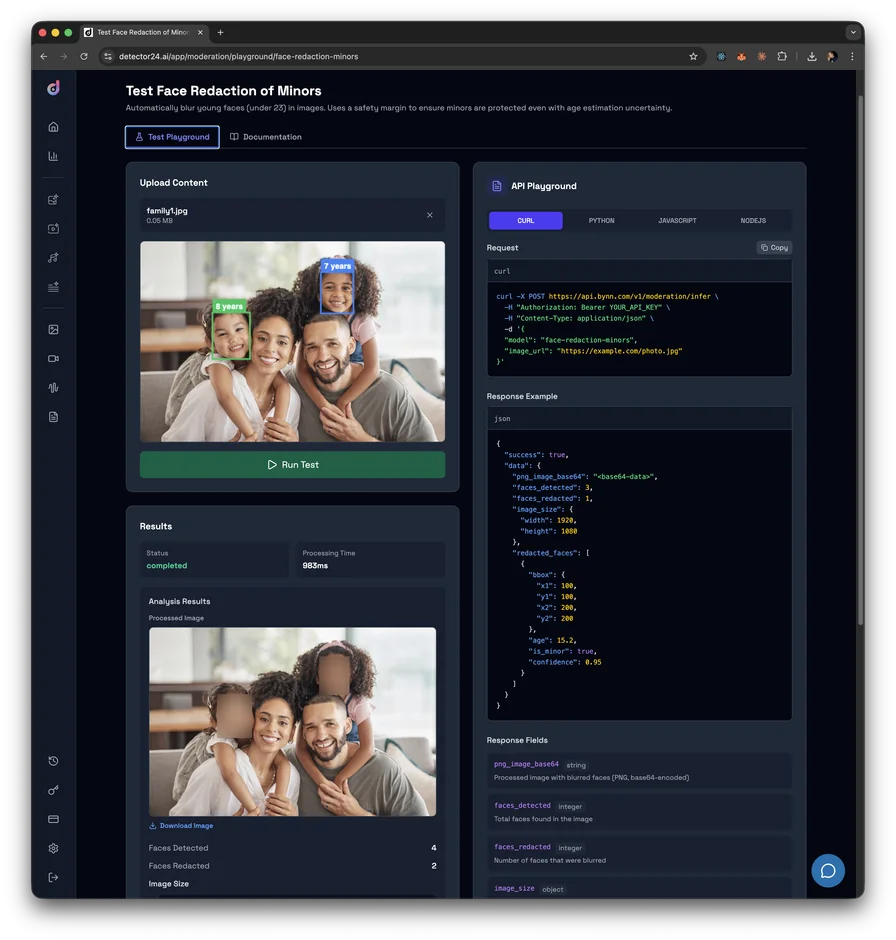

In this article, we explore the legal impact of not redacting minors’ faces on social media images and introduce Detector24.ai’s Bynn Face Redaction of Minors – an AI-driven solution that automatically blurs underage faces. We’ll examine the challenge from a legal perspective, how this technology works, and why it’s a game-changer for businesses and developers aiming to protect children’s privacy online.

The Challenge: Legal Risks of Unredacted Child Faces

Child Privacy Laws and Penalties: Child privacy protection has become one of the most legally consequential challenges in digital content today. Major jurisdictions have enacted strict protections that cover minors’ images. In the United States, the Children’s Online Privacy Protection Act (COPPA) prohibits online services from collecting personal information from children under 13 without verifiable parental consent, and its definition of personal information explicitly includes a photograph, video, or audio file containing a child’s image or voice. In the European Union, the GDPR requires parental consent when an online service relies on consent to process a child’s data; the default age limit is 16, and member states may lower it to as low as 13. The most serious GDPR violations can draw fines of up to €20 million or 4% of global annual turnover, whichever is higher, and regulators have not hesitated to penalize companies for mishandling kids’ data. The UK’s Age Appropriate Design Code (Children’s Code) similarly mandates high-privacy defaults for online services likely to be accessed by children, and it is enforced under UK data protection law with fines on the same scale. In short, failing to protect minors’ data carries enormous financial risk – potentially tens of millions in fines – and could even lead to criminal penalties in extreme cases.

Editorial Dilemmas for News Media: News organizations face a difficult dilemma when dealing with images or footage that include minors. Consider a school tragedy, a youth sports championship, or a community event – all are newsworthy stories that may involve children on camera. In many countries, journalistic standards (and often laws) prohibit identifying child victims of crimes or accidents without parental consent. Many jurisdictions also forbid naming or showing the faces of juvenile suspects. This means media outlets must blur minors’ faces or withhold names even when public interest is intense. Manually identifying and blurring every minor’s face in breaking news footage is a race against the clock – competitors might publish quickly, but the law and ethics demand caution. Editors are caught between legal compliance and being first to publish. If a minor’s face is aired unredacted, it could violate privacy laws or media regulations, leading to sanctions or public backlash.

Social Media Platforms’ Liability: Social networks and content-sharing platforms process billions of images containing children. People routinely upload photos from birthday parties, vacations, or school events – often without obtaining consent from every child’s guardian. From a legal perspective, platforms that host these images can be treated as controllers or processors of that personal data under privacy laws, and so share responsibility for protecting minors who appear without consent. If a platform knowingly allows identifiable images of children to spread without safeguards, it risks regulatory penalties for facilitating unlawful processing of minors’ personal data. Given the sheer volume of user uploads, this creates a daunting compliance challenge for any company without automated protections.

Schools, Sports, and Community Groups: It’s not just tech companies that must worry about minors’ faces in content. Schools and youth organizations produce many photos and videos of children, but not every parent agrees to have their child identified. For example, a class photo or a sports game snapshot can include kids from multiple families, some of whom have opted out of being pictured. Blanket redaction of all faces (blurring everyone) would destroy the value of these images, but manually blurring only certain faces is error-prone, time-consuming, and expensive at scale. Without an automated tool, ensuring each photo respects every child’s privacy consent is nearly impossible – one mistake could mean a privacy violation.

Exploitation and Security Concerns: Public images of minors can unfortunately be misused by predators or traffickers. Even innocent family photos could enable predatory behavior if a child’s face is identifiable and searchable online. By blurring minor faces, organizations remove a tool that bad actors might use – such as facial recognition or simple social media scouting – to target or track children. In this sense, protecting kids’ identities isn’t only about legal compliance; it’s also an important safety measure in the digital age.

Technical Challenges of Selective Blurring: Implementing “minors-only” face blurring is more complex than standard face blurring. A system must estimate each detected person’s age from their facial features – a task with inherent uncertainty, especially around the teenage years. The difference between a 17-year-old and an 18-year-old can be subtle, yet legally crucial. A missed minor (false negative) means leaving a child exposed (and a liability), whereas blurring an adult by mistake (false positive) can degrade user experience. Any reliable solution needs to err on the side of caution to minimize these risks. One effective strategy is to use a higher age cutoff as a buffer. For example, Detector24’s Bynn Face Redaction of Minors model blurs all faces estimated to be under about 23 years old – providing roughly a five-year safety margin above the age of majority. This conservative approach ensures that nearly all actual minors will be caught by the filter. A few young-looking adults might get blurred unnecessarily, but that’s a worthwhile trade-off if it means no child’s face is left unprotected.

Automated Solution: Detector24.ai’s Bynn Face Redaction of Minors

To address these challenges, Detector24.ai offers an automatic image redaction tool specifically designed for minors’ faces. The Bynn Face Redaction of Minors is essentially a face redaction API that uses artificial intelligence to selectively blur young faces while keeping adult faces untouched. This provides a much-needed solution for businesses and developers who need to process images or videos in compliance with child privacy laws. Instead of relying on manual editing or all-or-nothing blurring, this model can be integrated into workflows to automatically protect children in photos at scale.

How It Works: The Bynn model uses a multi-step AI pipeline to detect and redact minors’ faces:

- Face Detection: First, it detects all faces in an image using state-of-the-art computer vision techniques. This step finds the location of each face, regardless of age.

- Age Estimation: Next, for each detected face, the system estimates an age (or age range) using advanced neural networks. Any face predicted to be below the threshold age is marked as a minor.

- Selective Blur: If a face is deemed likely to belong to a young person (under ~23 years old, per the chosen threshold), the software applies a Gaussian blur filter to that face region. The blur is strong enough (e.g. high sigma value) to ensure the face becomes unidentifiable.

- Adult Preservation: Faces estimated to be adults (around 23+ years) are left unblurred. The result is that the image retains context and relevant detail — adult faces remain visible — while all probable minors are safely obscured.

This selective redaction process runs quickly and can handle images in bulk, making it feasible for platforms that need to moderate user content on the fly. Because it’s offered as an API, developers can integrate it into applications or media workflows with a simple call, then get back a processed image that has any minors’ faces automatically blurred out.
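The decision step in the pipeline above can be sketched in a few lines of Python. This is an illustration only, not Detector24’s implementation: the `DetectedFace` record and `split_by_age` helper are hypothetical names, and face detection, age estimation, and the blur itself are assumed to happen elsewhere.

```python
from dataclasses import dataclass

AGE_THRESHOLD = 23.0  # blur cutoff quoted in the article


@dataclass(frozen=True)
class DetectedFace:
    """Hypothetical record for one face after detection and age estimation."""
    box: tuple[int, int, int, int]  # (left, top, right, bottom), in pixels
    estimated_age: float


def split_by_age(faces, threshold=AGE_THRESHOLD):
    """Return (faces_to_blur, faces_to_keep) using a strict '<' comparison."""
    to_blur = [f for f in faces if f.estimated_age < threshold]
    to_keep = [f for f in faces if f.estimated_age >= threshold]
    return to_blur, to_keep


# Made-up faces standing in for the output of the first two pipeline steps.
faces = [
    DetectedFace(box=(40, 30, 120, 110), estimated_age=9.5),
    DetectedFace(box=(200, 25, 290, 115), estimated_age=21.0),
    DetectedFace(box=(360, 20, 450, 112), estimated_age=41.2),
]
to_blur, to_keep = split_by_age(faces)
print(len(to_blur), "to blur;", len(to_keep), "left visible")
```

Only the boxes in `to_blur` would then be passed to the blur step, which is what keeps adult faces and the rest of the scene intact.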

Safety Threshold: Why Under 23 Years?

You might wonder why the model blurs faces that appear under 23 years old instead of stopping at 18. This higher cutoff is intentionally chosen to provide a safety margin for the uncertainty in AI-based age estimation. Even the best age prediction algorithms can be off by a few years. By setting the blur threshold a full five years above the typical age of adulthood (18), the system ensures that actual minors are very unlikely to slip through unredacted. In practice, if the AI mistakenly thinks a 17-year-old looks 19 or 20, the 23-year rule will still catch and blur that face. This conservative buffer does mean some young adults (e.g. a 21-year-old who looks youthful) might get blurred unnecessarily, but most organizations prefer to err on the side of caution. Protecting children’s privacy is prioritized over perfect precision. Businesses using this API gain peace of mind, knowing that the risk of leaving a minor’s face unblurred due to an age-estimation error is extremely low.
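A short worked example makes the buffer concrete. The sketch below assumes the estimator’s mistake can be modeled as a signed number of years added to the true age; `still_blurred` is a hypothetical helper for illustration, not part of the API.

```python
AGE_OF_MAJORITY = 18
BLUR_THRESHOLD = 23  # cutoff quoted in the article


def still_blurred(true_age, estimation_error, threshold=BLUR_THRESHOLD):
    """True if a face is blurred even though its age was misjudged.

    estimation_error is in years; positive means the model guessed too old.
    """
    estimated_age = true_age + estimation_error
    return estimated_age < threshold


buffer_years = BLUR_THRESHOLD - AGE_OF_MAJORITY
print(buffer_years)          # size of the safety margin in years
print(still_blurred(17, 3))  # 17-year-old judged to be 20
print(still_blurred(17, 6))  # 17-year-old judged to be 23
print(still_blurred(21, 0))  # 21-year-old judged correctly
```

Under these assumptions a 17-year-old is still blurred when overestimated by three years, is missed only when the overestimate reaches six years, and a correctly estimated 21-year-old is blurred as well, which is the over-blurring cost of the margin.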

Developer Insights: API Response and Integration

From a developer’s perspective, integrating a face redaction API like Bynn is straightforward. Detector24’s API takes in an image and returns both the redacted image and useful metadata about the processing. The response structure includes:

- png_image_base64: The processed image with minor faces blurred (as a base64-encoded PNG).

- faces_detected: The total number of faces found in the original image.

- faces_redacted: How many of those faces were classified as young (under threshold) and thus blurred.

- image_size: The original image’s dimensions (width and height).

- redacted_faces: An array of details for each blurred face – including the face’s bounding box coordinates in the image, the age estimate, a flag (true/false) indicating it was considered a minor, and the model’s confidence score in that determination.

This rich metadata allows developers and administrators to not only obtain the anonymized image but also to log or review which faces were redacted. For example, an application could inform a user uploading a group photo that “3 faces were blurred for privacy” as feedback. The bounding box and age information for each redacted face can be used to highlight where blurring occurred, or for an optional human review step if needed (say, to double-check cases where an adult might have been blurred).
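As a rough sketch of how a client might consume such a response, the Python below decodes the returned image and builds the user-facing notice. The top-level field names come from the list above; the `summarize_response` helper and the sample payload are made up for illustration, and the per-face entries in `redacted_faces` are left out because their exact key names are not given here.

```python
import base64
from typing import Any


def summarize_response(response: dict[str, Any]) -> tuple[bytes, str]:
    """Decode the redacted PNG and build a short notice for the uploader."""
    png_bytes = base64.b64decode(response["png_image_base64"])
    redacted = response["faces_redacted"]
    if redacted == 0:
        notice = "No faces were blurred."
    elif redacted == 1:
        notice = "1 face was blurred for privacy."
    else:
        notice = f"{redacted} faces were blurred for privacy."
    return png_bytes, notice


# Made-up payload: the image is just the 8-byte PNG signature, not a real picture.
example_response = {
    "png_image_base64": base64.b64encode(b"\x89PNG\r\n\x1a\n").decode("ascii"),
    "faces_detected": 5,
    "faces_redacted": 3,
}

png_bytes, notice = summarize_response(example_response)
print(notice)
```

In a real integration, `png_bytes` would be written to storage or served in place of the original upload.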

Technical Details: Key technical parameters of the model include:

| Parameter | Value |
|---|---|
| Age Threshold | < 23 years (safety margin) |
| Blur Method | Gaussian blur (sigma = 20) |
| Confidence Threshold | 0.2 (minimum probability to treat face as minor) |
| Output Format | PNG (for the returned image) |
| Max File Size | 20 MB (input image size limit) |
| Supported Formats | GIF, JPEG/JPG, PNG, WebP (input types) |

These details show that the model is tuned for a balance of privacy and performance. The relatively low confidence threshold (0.2) makes the system quick to blur in doubtful cases (aligning with the conservative approach). The high blur strength ensures that blurred faces are truly unidentifiable. And with support for common formats and large images, the API is flexible enough to handle real-world content.
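The input limits in the table lend themselves to a client-side pre-check before an image is uploaded. The following Python is a minimal sketch under two assumptions that the table does not settle: that “20 MB” means 20,000,000 bytes (the stricter reading), and that checking the file extension is an acceptable stand-in for inspecting the file contents. `check_upload` is a hypothetical helper name.

```python
from pathlib import Path

MAX_BYTES = 20 * 1000 * 1000  # assumption: "20 MB" read as decimal megabytes
SUPPORTED_SUFFIXES = {".gif", ".jpeg", ".jpg", ".png", ".webp"}


def check_upload(filename: str, size_bytes: int) -> list[str]:
    """Return a list of problems; an empty list means the file looks acceptable."""
    problems = []
    suffix = Path(filename).suffix.lower()
    if suffix not in SUPPORTED_SUFFIXES:
        problems.append(f"unsupported format: {suffix or 'no extension'}")
    if size_bytes > MAX_BYTES:
        problems.append("file exceeds the 20 MB limit")
    return problems


print(check_upload("team_photo.JPG", 5_000_000))
print(check_upload("scan.bmp", 1_024))
print(check_upload("banner.png", 25_000_000))
```

Rejecting unsuitable files locally avoids a round trip to the API and gives the uploader a specific reason for the failure.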

Use Cases for Businesses and Developers

An automated minor-face redaction solution unlocks valuable applications across various domains:

- Social Platforms & Apps: Social media networks, photo-sharing sites, and forums can automatically protect children’s identities in user-uploaded content. Whenever a user posts a photo, the platform could run this API to blur any faces that appear under 23, preventing accidental exposure of a child and keeping the platform in compliance.

- Schools and Youth Organizations: Schools, camps, clubs, and other youth programs can batch-process event photos before publishing them. This ensures any child who doesn’t have photo consent on file is automatically anonymized, while still allowing proud parents to see permitted kids in newsletters or galleries.

- News & Media Outlets: News publishers can integrate the tool into their media workflows for both images and videos. Journalists and editors can quickly blur all minor faces in footage, meeting legal and ethical standards for reporting on children without delaying press time. Breaking news can be released without compromising on privacy compliance.

- Developer Tools & Photo Services: Developers building any service that handles user images can offer a “privacy blur” feature using the API. For instance, a cloud storage or photo management app might include an option to bulk-blur faces of minors in a user’s photo collection, catering to privacy-conscious families or organizations.

- Sports and Event Photography: Photographers or event organizers can use the redaction model when sharing photos from youth sporting events, school plays, or community gatherings. Underage participants and bystanders will be blurred, while adult coaches or officials remain visible – striking a balance between documentation and privacy.

These use cases demonstrate that the technology is broadly applicable. Anywhere children’s images might appear, an automatic blurring tool is a smart safeguard to avoid legal landmines and show a commitment to privacy.

Important Considerations and Limitations

While automated face redaction for minors is powerful, it’s important to keep a few considerations in mind:

- Conservative Blurring: Because of the safety margin, the system may blur some young-looking adults (e.g. 18–22 year-olds). This is a deliberate trade-off to minimize the chance that an actual minor is missed. It slightly reduces how much of the image stays visible, but it greatly increases protection.

- Age Estimation Uncertainty: AI age detection isn’t perfect. Factors like lighting, pose, or image quality can affect the age estimate. The ~5-year buffer covers many cases, but not all. It’s wise to monitor how the model performs on your content and adjust the configuration if needed.

- Face Angle & Image Quality: Individuals not looking directly at the camera (for example, faces turned significantly to the side) or very low-resolution faces can be harder to classify accurately. A 16-year-old in profile might be estimated as an adult, or vice versa. Understand that the model handles most cases well, but extreme angles or poor image conditions can be challenging – a manual review might be required for those edge cases.

- Privacy-By-Design Approach: Implementing this API should be part of a broader privacy by design strategy. Ideally, only the redacted images (with blurred faces) should be used for any public-facing content or long-term storage, and access to original, unblurred images should be limited. The face redaction tool is one piece of the puzzle – organizations still need strong policies, user consent practices, and security measures for comprehensive child data protection.
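One way to act on these limitations is to route borderline redactions to a human reviewer. The Python below is a sketch under assumptions: the `RedactedFace` fields mirror the age estimate and confidence score described for each blurred face, but their names, the two-year review band, and the 0.5 confidence cutoff are illustrative choices rather than documented values.

```python
from dataclasses import dataclass


@dataclass
class RedactedFace:
    """Hypothetical view of one blurred face's metadata."""
    estimated_age: float
    confidence: float  # model's confidence in its decision, from 0 to 1


def needs_manual_review(face, threshold=23.0, age_band=2.0, min_confidence=0.5):
    """Flag blurred faces that sit close to the cutoff or have low confidence."""
    near_cutoff = face.estimated_age >= threshold - age_band
    uncertain = face.confidence < min_confidence
    return near_cutoff or uncertain


batch = [
    RedactedFace(estimated_age=9.0, confidence=0.95),
    RedactedFace(estimated_age=22.0, confidence=0.90),
    RedactedFace(estimated_age=12.0, confidence=0.30),
]
review_queue = [face for face in batch if needs_manual_review(face)]
print(len(review_queue))
```

Because this check only sees faces that were blurred, it helps catch adults who were blurred unnecessarily. Catching a missed minor would still require spot-checking faces that were left visible.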

Conclusion

The legal impact of not redacting minors’ faces in social media images is substantial. Hefty fines, lawsuits, and reputational damage await those who ignore child privacy protections. In a world where digital content is ubiquitous, businesses must be proactive about safeguarding children’s identities. The good news is that technology is rising to meet this need. Solutions like Detector24.ai’s Bynn Face Redaction of Minors provide an automated, scalable way to blur children’s faces in an image or video, with a conservative threshold that keeps missed minors rare. By incorporating an API for face redaction of minors into their platforms, companies can support compliance with COPPA, the GDPR, and other regulations without crippling their workflow or content. This helps both legal teams and developers: privacy protection is built in by design, and engineers can focus on building features while the AI handles redaction in the background.

Ultimately, investing in an automatic minors’ face blurring solution is not just about avoiding penalties – it’s a statement that your organization values and protects children’s privacy. In the eyes of regulators, users, and the public, that commitment goes a long way. With the right tools in place, we can continue to share and innovate in the digital space while keeping those who are most vulnerable – our children – safe from exposure.

Sources: COPPA personal information definition; GDPR fine limits; Instagram child data fine.
