Video Moderation for Platforms: Frame-by-Frame AI Analysis Explained

Why video content moderation is now a high-risk priority

Video has quietly become the dominant “attack surface” of the modern internet: it is emotionally persuasive, difficult to audit at scale, and highly reusable across platforms (clipped, reposted, mirrored, and recontextualized). The sheer throughput is also unprecedented: YouTube publicly reports “over 20 million videos uploaded daily,” a scale that makes purely manual review structurally impossible. Video content moderation is the process of reviewing and managing videos so that they comply with a platform's standards and guidelines.

At the same time, regulatory expectations have moved from reactive takedowns toward demonstrable, systematic risk management. In the EU, the Digital Services Act (DSA) applies broadly across online platforms and became applicable to all covered services in February 2024, bringing graduated obligations around illegal content handling, transparency, and risk controls. In the US, COPPA continues to impose parental notice/consent obligations when services are directed to children under 13 (or when operators have actual knowledge they’re collecting personal information from children), which becomes relevant when video features enable child-directed content, child audiences, or child creators. Platforms must have a robust content moderation system in place to ensure legal compliance and adherence to platform rules.

A third accelerant is synthetic media. Deepfakes are no longer confined to novelty; they intersect with fraud, impersonation, harassment, and misinformation, and they now appear in both recorded video and livestream contexts. In parallel, voice cloning has matured quickly enough that human listeners can struggle to reliably distinguish cloned voices from authentic ones—raising the stakes for audio moderation and identity spoofing defenses. The risks associated with video content are heightened by its immersive nature, which can lead to the rapid spread of harmful material.

The practical outcome for Trust & Safety, compliance, and platform risk teams is simple: video moderation is no longer a “community guidelines” feature. It is a safety system with legal exposure, operational stress, and reputation risk—especially in short-form and live formats where the window for intervention is measured in seconds. Effective moderation protects the platform's reputation by ensuring compliance with regulations and maintaining user trust.

The goal of video moderation is to filter out inappropriate content, such as violence, explicit material, hate speech, or content that violates a platform's service terms. Managing user-generated content at this scale is a major challenge, requiring platforms to moderate video content using a combination of human moderators and automated tools. Moderation should reflect your platform's purpose and the people who use it, tailoring strategies to the intended audience. The effectiveness of video moderation relies on clear guidelines about what constitutes inappropriate content.

Where traditional moderation fails at the scale of user-generated video

Traditional video moderation stacks—human review queues, user reporting, and keyword filters—break down for four reasons: throughput, latency, inconsistency, and human cost. Moderation is the process of reviewing and controlling user-generated content to ensure compliance with community standards and prevent violations such as hate speech, violence, or explicit material.

Throughput and latency are the obvious failures. “Review everything” collapses under volume, and “review later” fails when distribution is immediate and viral, particularly in livestreams or real-time chat/video hybrids. Moderation can happen at different stages: pre-moderation (review before publishing), post-moderation (review after publishing), and reactive moderation (review triggered by user reports). The DSA’s emphasis on transparency and systemic risk mitigation reinforces that regulators increasingly expect platforms to show how they manage risk, not merely that they remove content after the fact.

User reporting and review queues are essential parts of a content moderation system, allowing users to report content or flag inappropriate content. Flagging videos helps platforms quickly identify and address problematic material, supporting trust & safety protocols.

Inconsistency is the quieter failure mode. Human moderators can be highly accurate on clearly defined categories, but edge cases are unavoidable: context, satire, documentary footage, and culturally specific signals routinely create disagreement. The governance literature on automated moderation highlights that real-world enforcement often boils down to explicit trade-offs between false positives (overblocking) and false negatives (misses), and that naïve “accuracy” metrics can be misleading in low-prevalence harm scenarios. Human oversight remains crucial, especially in hybrid moderation systems that combine AI tools with human review to improve accuracy and maintain user trust.

Finally, there’s the human factor. Research continues to document the psychological toll content moderation work can impose, especially when reviewers are repeatedly exposed to graphic material. This doesn’t just create ethical concerns; it also creates operational brittleness (attrition, burnout, inconsistent decisions under fatigue), which in turn increases platform risk. Post-upload moderation allows content to be reviewed after publication, while reactive moderation relies on users to report inappropriate content, and combining these approaches can enhance safety.

That combination—scale pressure plus fragile human workflows—is why modern platforms increasingly treat automation not as a “nice to have,” but as the only viable way to enforce policy at upload speed while reserving human judgment for the highest-impact decisions. AI tools are now integral to the content moderation system, automating the detection and flagging of inappropriate content to improve efficiency and reduce the risk of false positives or delays that can frustrate users. Hybrid moderation combines the speed of AI with human judgment for better outcomes, allowing platforms to assess video content at scale while ensuring quality and compliance.

Content Moderation and the Digital Services Act

The Digital Services Act (DSA) has fundamentally reshaped the expectations for content moderation on digital platforms operating in the EU. Under the DSA, online services are now required to implement robust content moderation processes that not only remove harmful content—such as hate speech, terrorist content, and explicit material—but also uphold user rights and ensure transparency in how moderation decisions are made. This regulatory shift means that digital platforms must adopt a proactive approach, combining automated moderation tools with the expertise of human moderators to identify and address problematic content swiftly and fairly.

Effective content moderation under the DSA is about more than just compliance; it’s about fostering a respectful online environment where user safety and free speech are both protected. Platforms must be able to demonstrate how their moderation tools and processes work, provide clear explanations for content removal, and offer users meaningful recourse if their content is flagged or taken down. By prioritizing these practices, digital platforms not only protect their users from harmful content but also build trust and safeguard their own reputations in an increasingly regulated landscape.

Ultimately, the DSA makes content moderation a core operational and legal requirement for online services. Platforms that invest in transparent, balanced, and effective moderation processes are better positioned to protect users, maintain a safe online environment, and avoid the legal and reputational risks associated with non-compliance.

What frame-by-frame analysis means in practice

“Frame-by-frame analysis” is not marketing shorthand. It is a specific operational idea: decode a video stream into frames, run model inference on a sequence of images (and often corresponding audio segments), and continuously aggregate what the system learns into a time-aligned risk representation. Moderating video content is significantly more complex than moderating text or images, as it requires analyzing multiple dynamic layers—visuals, audio, and metadata—concurrently to ensure effective video content moderation.

Most consumer video runs at common frame rates such as 24, 25, 30, or 60 frames per second (fps). At 30 fps, you have ~33 milliseconds between frames; at 60 fps, ~16.7 ms. That math is unforgiving, but it also clarifies why “milliseconds matter” in live environments.
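The per-frame time budget follows directly from the frame rate. A minimal sketch of the arithmetic (the function name is illustrative, not part of any library):

```python
def frame_interval_ms(fps: float) -> float:
    """Time between consecutive frames, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Budget available per frame at the common frame rates mentioned above.
for fps in (24, 25, 30, 60):
    print(f"{fps} fps -> {frame_interval_ms(fps):.1f} ms per frame")
```

Any per-frame inference that takes longer than this interval has to be batched, sampled, or run in parallel to keep up with a live stream.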

Sampling strategy is the first design choice:

- Every-frame scanning maximizes coverage but increases compute costs. It can be warranted for short clips, high-risk categories, or ultra-fast live enforcement.

- Fixed-interval sampling (e.g., every kth frame) reduces cost but risks missing brief events. Sampling-based keyframe approaches are widely discussed in the video understanding literature as a baseline technique, precisely because they are simple—but they are also blunt instruments.

- Intelligent keyframe extraction aims to pick representative or “informative” frames based on motion, shot boundaries, feature change, or learned thresholds—preserving coverage while reducing redundant inference. Modern research increasingly treats this as a first-class preprocessing step for downstream video understanding tasks.
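To make the trade-off between these strategies concrete, here is a toy sketch. It assumes frames are already decoded into flat lists of pixel intensities in [0, 1], and it uses a simple mean-absolute-difference rule as a stand-in for keyframe selection; production systems typically rely on shot-boundary detection or learned features instead. All names are hypothetical.

```python
from typing import List, Sequence

Frame = Sequence[float]  # toy stand-in: flat list of pixel intensities in [0, 1]


def fixed_interval_indices(num_frames: int, k: int) -> List[int]:
    """Indices of every k-th frame, starting from frame 0."""
    if k <= 0:
        raise ValueError("k must be positive")
    return list(range(0, num_frames, k))


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two equally sized frames."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def change_based_keyframes(frames: Sequence[Frame], threshold: float) -> List[int]:
    """Keep frame 0, then any frame that differs enough from the last kept frame."""
    if not frames:
        return []
    kept = [0]
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[i], frames[kept[-1]]) >= threshold:
            kept.append(i)
    return kept


# A one-frame "flash" at index 3 in otherwise static footage.
dark, bright = [0.0] * 4, [1.0] * 4
clip = [dark, dark, dark, bright, dark, dark]
```

With this clip, sampling every second frame selects indices 0, 2, and 4 and misses the flash entirely, while the change-based rule keeps the flash frame and the frame where the scene returns to normal.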

Batch vs. real-time is the second design choice. Batch processing (scan after upload) can be adequate for archives or low-velocity content. But for livestreaming—especially ultra-low latency broadcast paths—platforms may be operating under sub-second delivery objectives. For example, Cloudflare has described WebRTC live delivery with sub-second latency as a product capability, and Apple has presented low-latency HLS modes with “less than two seconds” achievable at scale in public networks.

The key technical point—often missed—is that each analyzed frame becomes a structured dataset. Instead of “a video file,” the platform ends up with a timeline of machine-readable events:

- objects + bounding boxes + confidence,

- extracted text strings + localization,

- face signals (presence, occlusion, liveness cues, approximate age bands),

- scene labels (violence, explicit content, weapons-like objects, etc.),

- temporal features spanning multiple frames (actions, gestures, anomalies),

- audio transcript snippets aligned to timestamp ranges.

AI tools and automated systems review content by scanning frames, tags, audio, and metadata, enabling the detection and flagging of inappropriate content such as nudity, violence, or hate symbols in videos. This structure is what makes real-time decisioning possible: it transforms an unstructured stream into a stream of evidence, supporting the review content process that is central to effective video content moderation.
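One way to picture this "stream of evidence" is as a small set of time-aligned records. The sketch below is an assumption about how such a timeline could be modeled, not a schema from any particular vendor; every class and field name is hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Detection:
    label: str
    confidence: float
    # Optional bounding box as (x, y, width, height), normalized to [0, 1].
    box: Optional[Tuple[float, float, float, float]] = None


@dataclass
class FrameEvidence:
    timestamp_ms: int
    objects: List[Detection] = field(default_factory=list)
    ocr_text: List[str] = field(default_factory=list)
    scene_labels: List[Detection] = field(default_factory=list)


@dataclass
class TranscriptSegment:
    start_ms: int
    end_ms: int
    text: str


@dataclass
class EvidenceTimeline:
    frames: List[FrameEvidence] = field(default_factory=list)
    transcript: List[TranscriptSegment] = field(default_factory=list)

    def labels_between(self, start_ms: int, end_ms: int, min_conf: float = 0.5) -> List[str]:
        """Distinct object and scene labels seen in [start_ms, end_ms] at or above min_conf."""
        seen: List[str] = []
        for frame in self.frames:
            if start_ms <= frame.timestamp_ms <= end_ms:
                for det in frame.objects + frame.scene_labels:
                    if det.confidence >= min_conf and det.label not in seen:
                        seen.append(det.label)
        return seen
```

Keeping timestamps on every record is the design choice that matters here: it lets later stages query "what was seen between t1 and t2" rather than re-running inference.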

The frame-level AI toolkit from pixels to policy decisions

Frame-level moderation is rarely one model. It is a model ensemble (or a multimodal foundation model plus specialists) designed to capture different risk signals, because “harm” is not visually uniform. Hybrid moderation combines the efficiency of AI tools with the judgment of human oversight, ensuring that the moderation process is both accurate and adaptable. A platform moderating live commerce, gameplay clips, short-form reaction videos, and community livestreams needs multiple detection primitives that can be combined.

Computer vision object detection

Object detection answers the blunt but critical questions: what is in the frame, and where? Most modern detectors output bounding boxes with confidence scores, enabling downstream logic like “weapon-like object near a person’s hand for >N frames” rather than “weapon appears somewhere once.” The YOLO family is a canonical example of real-time object detection research, framing detection as a single-pass prediction problem and demonstrating practical real-time throughput on benchmark hardware.

AI tools play a crucial role in video moderation by automating the detection and flagging of inappropriate content in videos, such as nudity, violence, or hate symbols. These tools help platforms efficiently process large volumes of user-generated videos and support compliance with safety and legal standards.

In moderation contexts, object detection frequently targets:

- weapons and weapon-adjacent items,

- drugs/paraphernalia and transaction artifacts,

- explicit nudity cues (with careful policy mapping),

- extremist symbols and uniforms,

- fraud or scam props (e.g., QR codes, forged “documents,” giveaway banners).

Detection outputs are typically used to flag videos that may contain policy violations and to route them for further automated or human review, which helps maintain platform safety and operational integrity.

The “risk-aware” nuance is that object detection alone is insufficient: a kitchen knife in a cooking stream is not the same as a knife used in threats. That is why detection outputs should be treated as evidence, not an automatic verdict.
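The "present for more than N frames" style of rule mentioned earlier can be sketched as a simple persistence check over per-frame confidences. This assumes an upstream detector already produced one confidence value per analyzed frame for a given label; the function name and thresholds are illustrative.

```python
from typing import Sequence


def persists_for(confidences: Sequence[float], min_conf: float, min_frames: int) -> bool:
    """True if confidence stays >= min_conf for at least min_frames consecutive frames."""
    if min_frames <= 0:
        raise ValueError("min_frames must be positive")
    run = 0
    for conf in confidences:
        # Extend the current run on a confident frame, otherwise reset it.
        run = run + 1 if conf >= min_conf else 0
        if run >= min_frames:
            return True
    return False
```

A check like this only says that a detection was sustained. Whether the sustained object is a cooking knife or a threat still depends on context signals and, where needed, human review.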

Facial and biometric risk analysis

Face-related analysis can support child safety, impersonation defense, and deepfake resilience—but it also introduces privacy and compliance complexity because biometric data can be regulated as sensitive data when used for unique identification. Under GDPR, biometric data is defined as personal data resulting from technical processing relating to physical/physiological/behavioural characteristics enabling unique identification, and biometric processing for uniquely identifying a person is included among special-category data in Article 9. Ensuring legal compliance is critical when handling biometric data, as platforms must adhere to regulations such as GDPR, COPPA, and the Digital Services Act to avoid fines and manage legal risks.

Human oversight is essential in the moderation process, especially when dealing with sensitive or restricted content, to ensure accuracy and maintain user trust. In practice, “facial risk analysis” within video moderation often focuses on risk-limiting signals rather than trying to identify individuals:

- Age estimation / minor presence detection to support child-safety policies and age-gating controls (while recognizing that age estimation is probabilistic and should be used with calibrated thresholds and human review on borderline outcomes). Moderation strategies should also consider the intended audience when applying age estimation or minor detection, balancing safety with the needs and expectations of the platform's user base.

- Occlusion and liveness cues to detect suspicious presentation attacks (e.g., masks, screens, face-covering gestures), which are frequently relevant in creator verification and impersonation defense.

- Deepfake detection signals (covered further below), especially for high-risk domains such as political content, financial scams, romance scams, and live commerce.

When mapping these signals to policy, companies often draft extensive and detailed guidelines for moderators to refer to before reaching a decision, ensuring consistent and fair application of rules.
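Because age estimation is probabilistic, one common pattern is a three-way routing rule with an explicit uncertainty band that sends borderline outcomes to human review. The sketch below assumes the estimator returns a point estimate plus an error margin in years; the function name, labels, and default policy age are hypothetical and would need calibration against real data.

```python
def route_age_signal(estimated_age: float, margin: float, policy_age: int = 18) -> str:
    """Map an age estimate with an error margin to a routing decision."""
    if margin < 0:
        raise ValueError("margin must be non-negative")
    if estimated_age + margin < policy_age:
        return "likely_minor"      # even the upper bound is below the policy age
    if estimated_age - margin >= policy_age:
        return "likely_adult"      # even the lower bound meets the policy age
    return "human_review"          # the band straddles the policy age
```

Widening the margin sends more cases to reviewers and fewer to automatic handling, which is the lever a platform would tune per audience and risk category.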

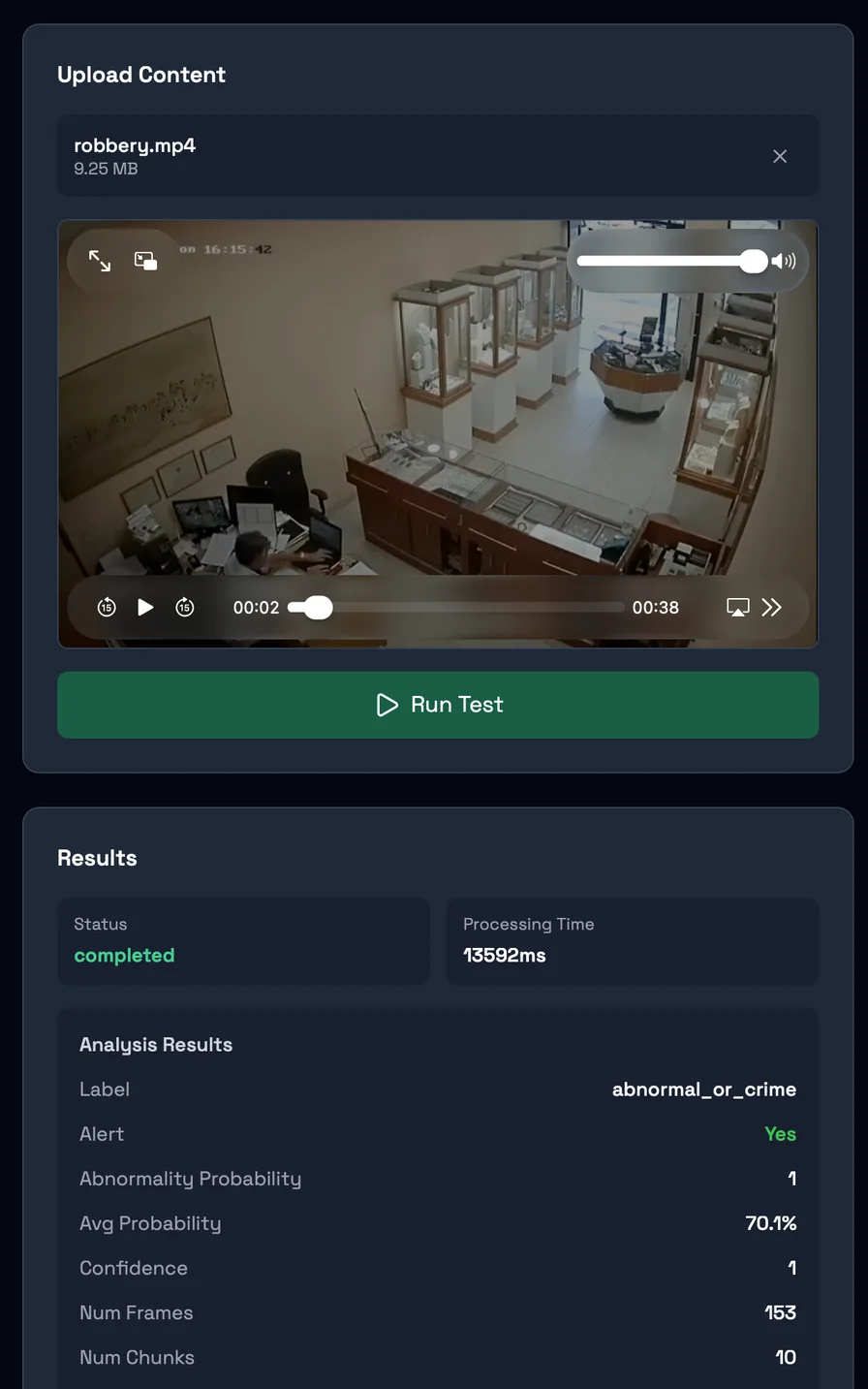

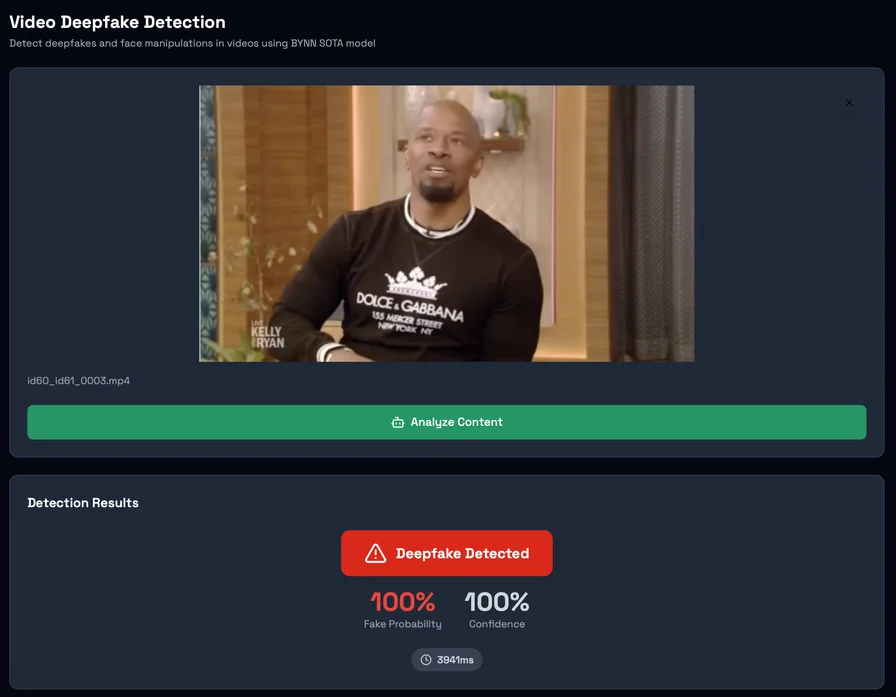

This is also where an infrastructure provider like Detector24 can play a bounded role: offering modular APIs for age detection/minor detection, face occlusion, liveness, and deepfake video detection that a platform can integrate into its moderation pipeline without building every model from scratch—while still keeping policy control and audit logging within the platform.

Optical character recognition for “text inside video”

Bad actors routinely embed policy-violating content in visual text—memes, on-screen captions, scam overlays, hate slogans, or “instructional” graphics—precisely to bypass text-only filters. The computer vision field treats this as scene text detection + recognition, and systems like CRAFT show how detecting text regions (including irregular shapes) can be approached with learned character-region awareness.

As part of the video moderation process, it is essential to review content for text-based policy violations to ensure compliance with platform rules and safety standards.

Why it matters: multimodal hate and harassment detection research has repeatedly shown that unimodal approaches fail on content where meaning emerges from the combination of image and text—hence the creation of benchmarks like the Hateful Memes challenge.

In a moderation stack, OCR outputs are usually fed into:

- profanity/hate/threat classifiers,

- scam pattern detectors (e.g., “send money,” impersonation claims),

- regulated product claims checks,

- links/QR code extraction and risk scoring.

Flagged videos are then reviewed for compliance with platform rules and restricted content policies, with both automated systems and human moderators working together to review content and ensure that sensitive or restricted content is handled appropriately. Community flagging also allows users to report inappropriate or high-risk content that may have bypassed automated filters, further strengthening the moderation process.
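As an illustration of what happens after OCR, the sketch below takes already-extracted text strings and pulls out URLs and a few scam-style phrases. The phrase list and function name are purely illustrative assumptions; a real system would use trained classifiers and maintained pattern sets rather than a hard-coded tuple.

```python
import re
from typing import Dict, List

URL_RE = re.compile(r"https?://\S+", re.IGNORECASE)

# Illustrative phrases only; not a real or complete scam lexicon.
SCAM_PHRASES = ("send money", "guaranteed returns", "official giveaway")


def scan_ocr_text(strings: List[str]) -> Dict[str, List[str]]:
    """Collect URLs and matched phrases from OCR-extracted text strings."""
    urls: List[str] = []
    phrases: List[str] = []
    for text in strings:
        urls.extend(URL_RE.findall(text))
        lowered = text.lower()
        phrases.extend(p for p in SCAM_PHRASES if p in lowered)
    return {"urls": urls, "phrases": phrases}
```

The extracted URLs and phrases would then be passed to link risk scoring and text classifiers, alongside the timestamp of the frame they came from.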

Scene and contextual classification

Scene classification asks: what is happening overall? This includes adult content categorization, violence detection, and self-harm risk cues—areas where context and temporal continuity matter, not just single-frame pixels. Moderation processes for scene and contextual classification must ensure adherence to platform rules, including the careful review and flagging of restricted content, to maintain compliance with safety standards and legal requirements.

Violence detection research in video has grown into its own subfield, with surveys mapping methods and datasets for violent behavior detection and emphasizing the need for spatiotemporal modeling rather than single-image inference.

In practice, platforms often implement context layers such as:

- “graphic violence” vs “sports violence,”

- “medical nudity” vs “sexual content,”

- “self-harm ideation content” vs “recovery discussion,”

…because policy enforcement is not merely detection; it is classification under a platform’s ruleset, with appeal pathways and auditability requirements.

These context layers require human oversight to ensure nuanced judgment, especially for complex or sensitive cases, and must consider the intended audience to balance safety with free speech and protect the specific user base. A multi-level moderation process is often used, where frontline moderators handle clear-cut cases and escalate more complex scenarios to higher-level moderators for further review.

Behavioral pattern analysis across time windows

Behavioral analysis is the bridge from “what objects exist” to “what actions occur.” Video action recognition architectures like I3D and SlowFast explicitly model spatiotemporal features, capturing motion patterns and temporal cues that single-frame models miss.

Modern video moderation processes leverage AI tools to analyze behavioral patterns and determine when moderation should happen, whether proactively, reactively, or in real time. Human oversight remains essential, as moderators review flagged behavioral patterns to ensure safety, compliance, and content quality.

This is the layer typically used for:

- suspicious gestures and repeated signaling,

- coordinated activity indicators (e.g., repeated patterns across streams),

- fraud choreography in live commerce (e.g., “flash the QR code,” “move to encrypted messaging,” “show counterfeit proof”).

To reduce exposure to distressing material, moderators often rotate through tasks as part of operational workflows.

Conceptually, the system shifts from point signals (frame t) to interval signals (t…t+Δ), which becomes crucial for livestream enforcement.
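The shift from point signals to interval signals can be sketched as a sliding-window aggregate over per-frame scores. This is a minimal example using a rolling mean; the window length and the choice of mean (rather than max or a learned temporal model) are assumptions made for illustration.

```python
from collections import deque
from typing import Deque, Iterable, Iterator


def windowed_mean(scores: Iterable[float], window: int) -> Iterator[float]:
    """Yield the mean of the most recent `window` scores after each new frame."""
    if window <= 0:
        raise ValueError("window must be positive")
    recent: Deque[float] = deque(maxlen=window)  # old scores fall off automatically
    for score in scores:
        recent.append(score)
        yield sum(recent) / len(recent)
```

Because the function is a generator, it can consume a live stream of scores and emit an interval-level signal after every frame without buffering the whole video.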

The Role of Human Moderators

While automated moderation systems are essential for managing the vast scale of user-generated content, human moderators remain a critical part of the moderation process. Human moderators bring the nuance, empathy, and contextual understanding that machine learning models and automated tools often lack, especially when it comes to interpreting satire, cultural references, or borderline cases that require careful judgment. Their role involves reviewing flagged content, making moderation decisions in line with platform guidelines, and ensuring that the moderation process is fair and consistent.

However, the work of human moderators comes with significant challenges. Repeated exposure to harmful content, including graphic violence, hate speech, and other disturbing material, can take a toll on mental health, sometimes leading to conditions such as post-traumatic stress disorder (PTSD). To address these risks, digital platforms must provide comprehensive support for their moderation teams. This includes specialized training, access to mental health resources, and clear protocols for escalating particularly distressing cases. It’s also vital that human moderators are empowered to make informed decisions, while being supported by transparent accountability measures.

By investing in the well-being and professional development of human moderators, digital platforms can ensure that their moderation process remains effective, ethical, and resilient—ultimately protecting both users and the people tasked with keeping online spaces safe.

Multimodal fusion and real-time risk scoring engines

A modern content moderation system is increasingly multimodal by default: visual frames, audio, on-screen text, metadata, and account/behavior signals are fused to reduce blind spots. These systems leverage advanced AI tools to automate the detection of inappropriate content, while human reviewers on internal trust and safety teams manually assess flagged video content to ensure accuracy and context.

The empirical motivation is straightforward: unimodal detectors miss meaning that emerges cross-channel. The Hateful Memes benchmark was explicitly built so that models relying solely on the image or solely on the text struggle, while multimodal models perform better (though, in the original benchmark results, still well below human accuracy). Similarly, moderation research on children’s videos has highlighted that visually benign content can carry inappropriate audio, motivating audiovisual fusion approaches.

Audio is a major part of the threat model in livestreams. Speech-to-text systems—especially those trained on very large, diverse datasets—enable near-real-time transcription that can then be scanned for threats, hate, grooming cues, scam scripts, or coercion language. This matters because policy violations often occur as spoken instruction layered over visually innocuous footage.

Once multimodal features exist, platforms typically implement a real-time risk scoring engine:

- Each frame (or short time slice) produces signals with confidences.

- Signals are normalized and combined into risk features.

- A scoring model (rules + ML) produces dynamic risk values over time.

- Thresholds map risk into actions: allow, blur, label, throttle distribution, temporarily block, or escalate to human review.
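The steps above can be sketched as a tiny rules-based engine. The signal names, weights, and thresholds below are hypothetical placeholders chosen for illustration; in practice they would be calibrated per policy and often combined with a learned scoring model.

```python
from typing import Dict, List, Tuple

# Hypothetical weights; they sum to 1 so the score stays in [0, 1].
WEIGHTS: Dict[str, float] = {"weapon": 0.5, "threat_text": 0.3, "threat_speech": 0.2}

# Checked from highest to lowest; the first threshold met decides the action.
THRESHOLDS: List[Tuple[float, str]] = [
    (0.8, "temporarily_block"),
    (0.5, "escalate_to_human_review"),
    (0.3, "label"),
]


def risk_score(signals: Dict[str, float]) -> float:
    """Weighted sum of signal confidences, each clamped to [0, 1]."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        conf = min(max(signals.get(name, 0.0), 0.0), 1.0)
        total += weight * conf
    return total


def action_for(score: float) -> str:
    """Map a risk score to an action; default to allowing the content."""
    for threshold, action in THRESHOLDS:
        if score >= threshold:
            return action
    return "allow"
```

Keeping weights and thresholds as data rather than code makes it easier to version them alongside written policy and to use different values for live and uploaded content.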

Human oversight is essential in this moderation process, as hybrid moderation combines the speed and scale of AI tools with the nuanced judgment of human reviewers to handle complex or sensitive cases. This approach helps maintain moderation accuracy and user trust.

Research prototypes like “VideoModerator” describe risk-aware frameworks that integrate multimodal features and highlight high-risk moments on a timeline, which is directly aligned with “frame-by-frame becomes structured evidence” as an operational concept.

In production systems, the decision engine is also the compliance hinge. Under the DSA, platforms may need to generate clear “statements of reasons” for certain moderation actions and submit structured statements into the DSA Transparency Database; the Commission’s documentation emphasizes that statements should be clear and specific. That nudges platform architecture toward auditable decisioning: storing which signals triggered which actions, under which policy mapping, at which timestamps. Legal compliance is a critical aspect, requiring moderation processes to adapt to evolving regulatory obligations and coordinate effectively among different teams.
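Auditable decisioning of this kind can be pictured as a record written at the moment an action is taken. The structure below is a hypothetical internal log format, not the schema of the DSA Transparency Database; field names and values are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class TriggeredSignal:
    name: str
    confidence: float
    start_ms: int
    end_ms: int


@dataclass
class DecisionRecord:
    content_id: str
    action: str
    policy_id: str
    policy_version: str
    signals: List[TriggeredSignal]

    def to_json(self) -> str:
        """Serialize the record (including nested signals) for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    content_id="video-123",                # hypothetical identifier
    action="escalate_to_human_review",
    policy_id="weapons",                   # hypothetical policy name
    policy_version="2025-01",              # hypothetical version label
    signals=[TriggeredSignal("weapon", 0.91, 12_000, 14_500)],
)
```

Storing the policy version with each decision is what allows a later appeal or regulator query to be answered against the rules that applied at the time.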

Regular transparency reports showing flagged content, removals, and error rates are valuable for demonstrating that the moderation process is thoughtful and not arbitrary. Effective moderation also depends on establishing clear community guidelines and transparent processes for content removal, helping users understand the standards and actions taken.

Detector24’s positioning fits naturally here when treated as infrastructure rather than a “policy brain”: it can supply modular detection models (image/video/text/audio and deepfake signals) and support real-time categorization, while the platform retains final policy thresholds, escalation logic, and appeals handling. Detector24’s published catalogue and model pages describe components relevant to this workflow—such as deepfake video detection, age/minor detection, face occlusion signals, and broader moderation model libraries—making it plausible as a building block in a larger, platform-owned decision engine.

Industry Use Cases for Content Moderation

Content moderation is a foundational practice across a wide range of industries that rely on user-generated content. Social media platforms, for example, use content moderation to detect and remove hate speech, explicit content, and terrorist content, helping to maintain a safe online environment and comply with legal requirements. On online gaming platforms, moderation is essential for curbing harassment, toxic behavior, and cheating, ensuring that gameplay remains fair and enjoyable for all participants.

Digital services such as online marketplaces, forums, and community platforms also depend on content moderation to filter out spam, phishing attempts, and other forms of malicious or inappropriate content. By implementing robust moderation systems, these industries can protect their users from harmful material, uphold community standards, and meet the legal obligations that come with operating large-scale online platforms.

In every case, effective content moderation is not just about compliance—it’s about building user trust, protecting brand reputation, and creating a safe online environment where communities can thrive. As the content moderation industry continues to evolve, these use cases highlight the importance of adapting moderation processes to the unique challenges and risks faced by each sector.

Deepfakes and synthetic media as a moving target for harmful content

Deepfake and synthetic media moderation is not one problem; it’s a family of problems:

- face swaps and identity puppeteering,

- lip-sync manipulation,

- fully synthetic “generated” scenes,

- voice cloning and audio deepfakes,

- hybrid attacks (real video + synthetic overlays + synthetic voice).

A major challenge in moderating deepfakes and synthetic media is the sheer volume and sophistication of content being produced in real time. This requires a robust moderation process that leverages advanced AI tools for automated detection, while also relying on human reviewers and human oversight to handle nuanced or sensitive cases.

Surveys in digital forensics describe deepfake detection approaches that exploit spatial artifacts (texture, blending boundaries), frequency-domain cues, and temporal inconsistencies (frame-to-frame discontinuities), and they emphasize that generalization to “in-the-wild” content is one of the central challenges. Specific research lines focus on cues such as lighting inconsistency, abnormal motion patterns, and other subtle signals that can be invisible to casual viewers but detectable statistically.

Audio deepfakes deserve equal attention. The speech deepfake detection literature has expanded rapidly (including large surveys spanning hundreds of papers), and community challenges like ASVspoof have created datasets and evaluation tracks specifically for spoofed and deepfake speech. Practically, this matters because attackers increasingly combine voice cloning with livestream persuasion (“I’m your manager—do this now”), social engineering, and identity spoofing.

From an engineering standpoint, frame-level analysis helps because it enables anomaly detection over time:

- confidence drift (the detector is uncertain in inconsistent ways),

- face region artifacts that fluctuate across frames,

- mismatched audio/visual synchronization cues,

- compression and re-encoding “fingerprints” that deviate from expected capture pipelines.
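As one simple example of anomaly detection over time, frame-to-frame instability in a detector's score can be summarized with a mean absolute delta. This is a toy heuristic under the assumption that one score per analyzed frame is available; it is not a deepfake detector in itself, and the function name is illustrative.

```python
from typing import Sequence


def mean_frame_to_frame_delta(scores: Sequence[float]) -> float:
    """Average absolute change between consecutive per-frame scores."""
    if len(scores) < 2:
        return 0.0
    deltas = [abs(b - a) for a, b in zip(scores, scores[1:])]
    return sum(deltas) / len(deltas)


stable = [0.2, 0.2, 0.2, 0.2]
flickering = [0.1, 0.9, 0.1, 0.9]
```

A steady sequence yields a delta near zero, while a score that flips between low and high on adjacent frames yields a large one. Such a summary would be one input among several to the risk engine, not a verdict.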

Legal compliance is critical in video moderation, as platforms must adhere to regulations such as the EU's Digital Services Act and the UK's Online Safety Act, and should track proposed legislation such as the US Kids Online Safety Act. Effective moderation not only protects users but also safeguards the platform's reputation and helps avoid substantial fines or other legal repercussions. Under these regimes, systemic moderation failures can lead to significant penalties and, in serious cases, restrictions on the service.

Platforms operating globally face the additional challenge of aligning with diverse legal frameworks and cultural expectations, which can create tensions when moderation rules seem inconsistent across regions.

But the risk-aware conclusion is uncomfortable: no detector remains “solved.” Systems need continuous evaluation against new generators and new attack styles, plus layered mitigations that do not rely on a single model’s output. Mistakes are inevitable, but how platforms respond to these errors—through transparent processes and rapid remediation—matters most for maintaining user trust and regulatory compliance.

On the Detector24 side, the company’s own product and model pages describe deepfake detection capabilities and a dedicated deepfake video model endpoint, including reported accuracy and processing characteristics (which should be independently validated in each platform’s environment, especially for livestream constraints).

False positives, bias, and responsible AI governance

Frame-by-frame AI moderation can fail in two ways: overblocking and under-enforcement. Both are harmful, but in different directions: overblocking chills legitimate speech and commerce; under-enforcement allows real harm and regulatory exposure. The moderation process increasingly relies on a combination of advanced AI tools, human oversight, and hybrid moderation systems to reduce bias and false positives, ensuring that both automation and human reviewers contribute to safer and more accurate outcomes.

A useful mental model is that most moderation systems operate in low-prevalence conditions (the majority of content is benign), which makes false positive management disproportionately important and complicates simplistic “accuracy” claims. Modern governance frameworks like the National Institute of Standards and Technology (NIST) AI Risk Management Framework emphasize continuous risk measurement, lifecycle monitoring, and socio-technical evaluation, because the risk is rarely just the model; it is the model-in-system, interacting with workflows, incentives, and users. Training for moderators should focus on context, not just keywords, and companies often draft extensive and detailed guidelines for moderators to refer to before reaching a decision, ensuring diverse perspectives are considered.
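The low-prevalence point is easiest to see with a worked calculation. The numbers below are invented for illustration: a classifier that catches 95% of violating videos and wrongly flags 1% of benign ones, applied to traffic where 0.1% of videos violate policy.

```python
def precision(prevalence: float, tpr: float, fpr: float) -> float:
    """Share of flagged items that are truly violating, given base rate and error rates."""
    true_pos = prevalence * tpr
    false_pos = (1.0 - prevalence) * fpr
    if true_pos + false_pos == 0:
        return 0.0
    return true_pos / (true_pos + false_pos)


def accuracy(prevalence: float, tpr: float, fpr: float) -> float:
    """Share of all items classified correctly."""
    return prevalence * tpr + (1.0 - prevalence) * (1.0 - fpr)


# Illustrative inputs: 0.1% prevalence, 95% true positive rate, 1% false positive rate.
p = precision(0.001, 0.95, 0.01)
a = accuracy(0.001, 0.95, 0.01)
print(f"accuracy={a:.3f}, precision={p:.3f}")
```

Under these assumptions accuracy is about 99%, yet fewer than one in ten flags is a true violation. That gap is why review queues and appeal volumes, not headline accuracy, tend to drive threshold decisions.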

On transparency and user recourse, civil society standards like the Santa Clara Principles stress notice, clear rules, and meaningful appeals—concepts that increasingly align with regulatory requirements such as DSA “statement of reasons” expectations. It is crucial to enforce platform rules while balancing free expression with content regulation, establishing transparent policies and feedback mechanisms to protect user rights. Effective moderation also includes establishing clear community guidelines and transparent processes for content removal.

For platforms operating in 2026 and beyond, the responsible target state looks like this:

- Policy mapping is explicit: model outputs map to written policies with versioning.

- Thresholds are calibrated (and different for live vs uploaded content).

- Human-in-the-loop is reserved for high-impact edges, not used as a band-aid for a weak model.

- Audit logs and “reproducible reasons” exist so the platform can explain decisions internally, to users, and to regulators where required.

Community content review works well when users are engaged and aware of the rules, supporting the effectiveness of moderation efforts and reinforcing compliance with platform standards.

Under these conditions, an infrastructure provider like Detector24 is most credibly positioned as a composable detection layer—supplying specialized moderation and identity/anti-spoofing components (e.g., age estimation, minor detection, face liveness/occlusion, deepfake video detection, and text moderation) that plug into a platform’s own policy engine, evidence store, and appeals process.

The trajectory is clear: frame-by-frame AI moderation is no longer optional. By 2026, it has become foundational infrastructure for any platform that operates at scale, distributes video at near-real-time speeds, and must prove—technically and procedurally—that it can prevent, detect, and respond to harm with measurable rigor.
