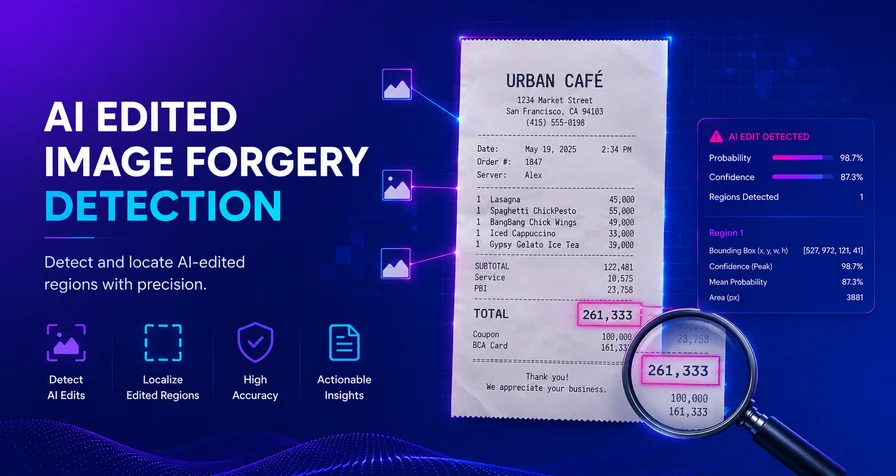

AI‑Edited Image Forgery: The New Fraud Frontier and How to Fight Back

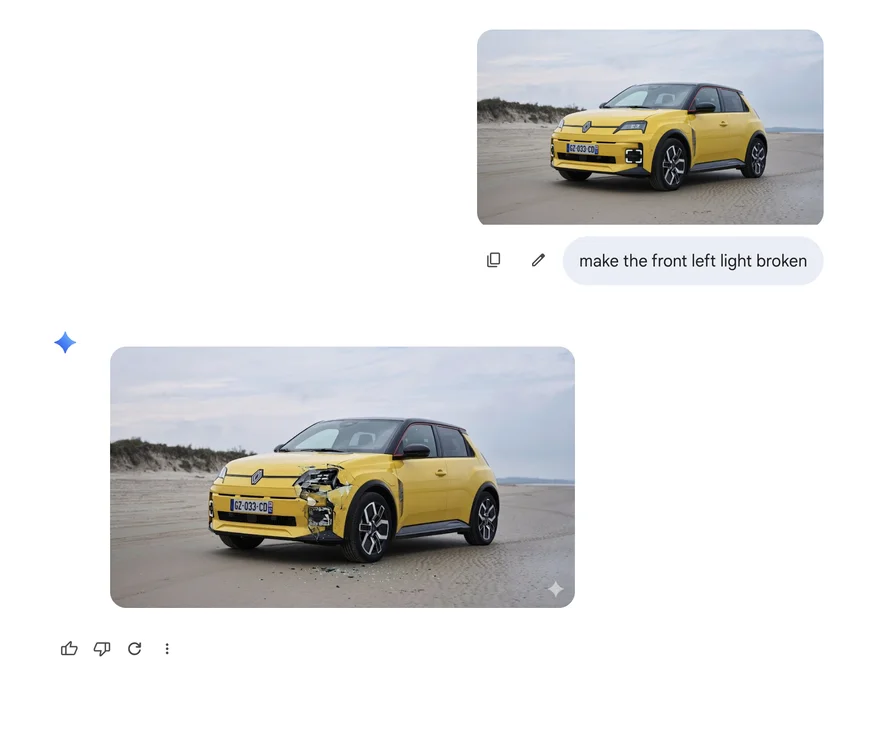

Digital images used to be easy to trust. Photos of a car accident, a scanned receipt or a driver’s licence were considered reliable evidence because editing them required professional tools and meticulous manual work. Over the last two years that assurance has evaporated. Generative models such as Google Nano‑Banana, Flux Kontext, GPT‑4o Image, Qwen‑Image‑Edit, Bagel, Step1X‑Edit and TextFlux allow anyone to perform “surgical” edits: upload a real photograph, write a sentence like “change the total to $4,800” or “add a dent to the front bumper,” and seconds later receive an image where only the specified region has been repainted. Lighting, shadows and textures match perfectly, and every pixel outside the altered region is byte‑identical to the original. The ease of prompt‑driven editing has rewired the economics of fraud, enabling scams in insurance, expenses, identity verification and even politics.

This article examines the growing problem of AI‑edited image forgery and explains how Bynn’s AI Edited Image Forgery Detection model tackles it. It draws on recent research, industry surveys and news reports to show how generative AI is reshaping fraud, why traditional detection methods are failing, and how new forensic techniques can restore trust.

Why AI‑Edited Image Forgery Matters

A surge in insurance and expense fraud

The insurance industry is experiencing a wave of image‑based fraud. Verisk’s March 2026 State of Insurance Fraud survey found that 98 % of insurers say AI‑powered editing tools are fueling an increase in digital insurance fraud. Over one‑third of consumers (36 %) admit they are increasingly comfortable altering claim photos or documents, and two‑thirds of insurers say digital media fraud often goes undetected. Nearly all insurers (99 %) report encountering AI‑altered documentation, and 76 % say such claims have become more sophisticated in the past year. A substantial share of Gen Z and Millennial respondents (55 % and 49 % respectively) say they would consider digitally editing evidence to strengthen a claim.

These trends are visible in expense management as well. An AppZen webinar notes that over 3.5 million fake receipts were created on the top four expense‑fraud websites in just six months, making hyper‑realistic fraudulent documentation a massive and growing threat. Manual audits cannot keep pace because AI‑generated receipts now include realistic wear, crumples, coffee stains, accurate logos and even handwritten tips, making them virtually indistinguishable from legitimate receipts. AppZen highlights examples where its AI flagged impossibly priced breakfast items and receipts from non‑existent restaurants. As AppZen’s consultant puts it, that volume of fake receipts equates to a massive amount of money walking out of organizations.

Identity fraud and document forgery

The problem extends beyond receipts. In early 2025, identity document fraud spiked by over 300 % in North America, driven largely by generative AI. Fraudsters have moved from crude cut‑and‑paste jobs to AI‑generated credentials and official documents (contracts, invoices and reports) that look perfectly authentic. Underground services now offer highly realistic fake IDs for as little as $15, and fraud‑as‑a‑service marketplaces sell thousands of forged documents for under $10. A single bad actor with a laptop can spin up hundreds of convincing fakes in minutes, overwhelming manual verification systems. These documents sail through basic checks because AI can replicate fonts, layouts and security elements with near‑perfect accuracy, and the resulting files often have high OCR readability, so naive systems that equate text extraction with authenticity are fooled.

Even highly regulated identity checks are vulnerable. A 2025 demonstration highlighted by HYPR shows a user creating a convincing digital passport with GPT‑4o in five minutes. The fake would likely pass many automated Know‑Your‑Customer (KYC) systems. Experts warn that photo‑based KYC and selfie checks are becoming obsolete because generative models can produce high‑resolution, pixel‑perfect forgeries that are indistinguishable from real documents. In such a digital environment, relying solely on an image of an ID or even a live selfie offers little protection.

Misinformation and political deepfakes

AI‑edited images and deepfake videos have entered politics. Reuters reports that the U.S. National Republican Senatorial Committee created an AI‑generated ad in which Texas State Representative James Talarico appears to say phrases he never spoke; only a small “AI generated” disclaimer appears. Political strategists note that AI‑generated videos are persuasive, cost‑effective and largely unregulated. A study cited by Reuters found that people struggle to identify deepfake videos and that their opinions are influenced by them, prompting warnings that AI‑generated misinformation could erode trust in elections. Because there is no federal regulation constraining AI use in political messaging, campaigns and social media users are free to deploy synthetic content that confuses or deceives voters.

Why Traditional Detection Fails

Traditional forgery detection systems fall into two categories: manual review and whole‑image AI classification. Manual review cannot scale—finance teams approve thousands of receipts and claims a month, while deepfake content spreads at viral speed. Whole‑image AI detectors only flag whether an image looks synthetic; they cannot localize the specific region that has been manipulated. When AI editing only alters a small region (e.g., changing one digit on a receipt or adding a dent to a car), the surrounding real pixels confuse whole‑image models into thinking the image is authentic. In laboratory tests referenced on the Bynn model page, conventional AI detectors achieved only 51 % accuracy in such cases.

Generative editing also defeats static rule‑based checks. Most document verification systems rely on template matching (“if the font doesn’t match, flag it”) or OCR validation (“if the data parses correctly, accept”). AI fakes are explicitly designed to bypass these checks by matching official fonts and producing correctly structured data. As one industry executive observed, “AI has fully defeated most of the ways that people authenticate documents.” Even multi‑factor identity checks can be circumvented if they rely on a single photographic proof, as generative models can produce deepfake selfies and manipulate real-time video feeds.

The result is a detection gap. Insurers report that they are using third‑party or internal AI‑based detection tools, yet only 58 % feel confident in their ability to detect edits to photos and videos at scale. Manual reviewers, auditors and KYC agents are overwhelmed by hyper‑realistic fakes and cannot reliably spot micro‑edits.

How AI‑Edited Image Forgery Detection Works

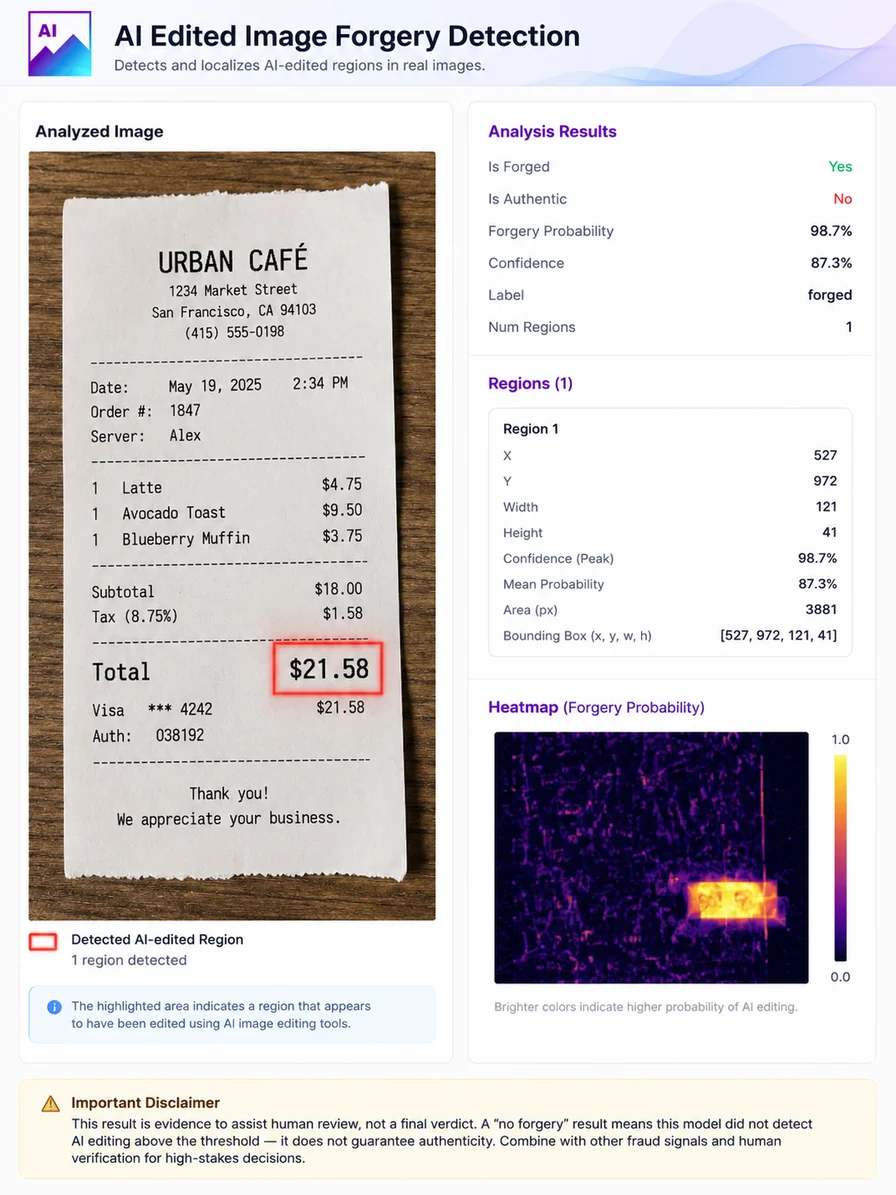

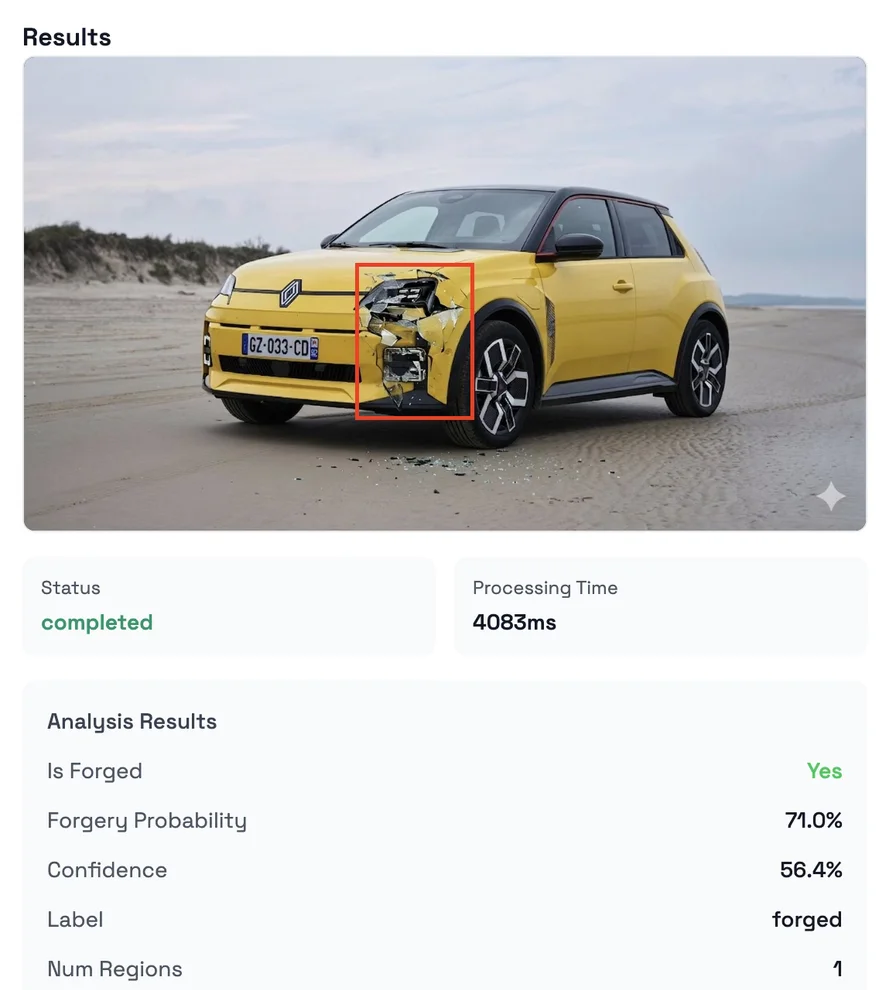

To address this gap, Bynn developed the AI Edited Image Forgery Detection model. Unlike whole‑image classifiers, this model localizes precisely which regions of an image have been altered. The system draws bounding boxes around repainted regions—whether that’s the total on a receipt, a scratch on a car, a line item on an invoice or a date on an ID—so investigators can see exactly what changed. Key innovations include:

- Triple‑stream encoding: the model processes the image through three parallel deep encoders (a main RGB branch, a frozen RGB reference branch and a noise branch that operates on a high‑pass filtered view to expose editing residuals invisible in the RGB domain). This design captures both high‑level structure and subtle high‑frequency artifacts.

- Contrastive feature alignment: during training, an asymmetric contrastive loss pulls authentic pixels together in feature space and pushes edited pixels away from them, so genuine and forged pixels separate even when the visible difference is microscopic.

- Pixel‑level segmentation: fused features feed a four‑convolution head that produces a per‑pixel forgery probability map. A threshold of 0.40 (calibrated for the best F1 score) binarizes the map, creating a mask of potentially edited pixels.

- Region extraction: connected components in the binary map are emitted as bounding boxes with per‑region peak and mean confidence scores, allowing reviewers to navigate from “this image was edited” to “this specific 41×121‑pixel area was repainted”.

- Editor‑agnostic generalization: the model is trained across nine editor families—Nano‑Banana, Bagel, Kontext, GPT‑4o, Qwen‑Image‑Edit, TextFlux, Step1X‑Edit, Gemini and Ideogram‑v2—enabling it to detect edits from multiple AI image editing tools. As a result, it learns the concept of “edit” itself rather than memorizing patterns from a single editor.
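The thresholding and region-extraction steps in the list above can be sketched in a few lines. This is a minimal illustration, not Bynn's implementation: the function name `extract_regions`, the use of 4-connectivity, the inclusive `>=` comparison and the toy probability map are all assumptions. Only the 0.40 threshold and the idea of reporting a bounding box with peak and mean confidence per connected component come from the description above.

```python
from collections import deque


def extract_regions(prob_map, threshold=0.40):
    """Binarize a per-pixel probability map and return one box per 4-connected blob.

    prob_map is a list of rows of floats in [0, 1]. The 4-connectivity and the
    inclusive comparison are assumptions made for this sketch.
    """
    height, width = len(prob_map), len(prob_map[0])
    seen = [[False] * width for _ in range(height)]
    regions = []
    for start_r in range(height):
        for start_c in range(width):
            if seen[start_r][start_c] or prob_map[start_r][start_c] < threshold:
                continue
            # Breadth-first flood fill collects every pixel in this component.
            queue = deque([(start_r, start_c)])
            seen[start_r][start_c] = True
            pixels = []
            while queue:
                r, c = queue.popleft()
                pixels.append((r, c))
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                    inside = 0 <= nr < height and 0 <= nc < width
                    if inside and not seen[nr][nc] and prob_map[nr][nc] >= threshold:
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            rows = [r for r, _ in pixels]
            cols = [c for _, c in pixels]
            scores = [prob_map[r][c] for r, c in pixels]
            regions.append({
                "box": (min(cols), min(rows), max(cols), max(rows)),  # x0, y0, x1, y1 (inclusive)
                "peak": max(scores),
                "mean": sum(scores) / len(scores),
                "area": len(pixels),
            })
    return regions


# Toy 4x5 probability map with two separate blobs above the threshold.
demo = [
    [0.05, 0.10, 0.05, 0.02, 0.01],
    [0.10, 0.90, 0.70, 0.03, 0.02],
    [0.05, 0.60, 0.50, 0.04, 0.45],
    [0.02, 0.03, 0.04, 0.05, 0.80],
]
for region in extract_regions(demo):
    print(region)
```

On a real image the same idea would normally run on an array library with a built-in labeling routine; the pure-Python version is used here only to keep the sketch dependency-free.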

These design choices translate into strong performance. Across all editors, the model achieves an F1 score of 0.7447, a precision of 0.7429 and a recall of 0.7464 with an Intersection over Union (IoU) of 0.5932. Per‑editor results vary: F1 scores exceed 0.86 for Qwen‑Image‑Edit and 0.80 for TextFlux and Gemini+Ideogram, while performance on GPT‑4o edits is weaker (F1 ≈ 0.57). Overall, the model isolates most AI‑edited regions, although with precision and recall both near 0.75 it neither finds every edit nor avoids every false alarm.
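The headline numbers can be sanity-checked against each other. The sketch below assumes the metrics are computed from one pooled set of pixel counts, which the article does not state; under that assumption F1 is the harmonic mean of precision and recall, and IoU follows from F1 as IoU = F1 / (2 - F1). The function names are illustrative.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def iou_from_f1(f1):
    """Convert F1 (Dice) to IoU (Jaccard).

    With F1 = 2TP / (2TP + FP + FN) and IoU = TP / (TP + FP + FN),
    algebra gives IoU = F1 / (2 - F1). This holds for a single
    confusion matrix, not for averages of per-image scores.
    """
    return f1 / (2 - f1)


# Figures quoted in the article.
precision, recall, reported_f1 = 0.7429, 0.7464, 0.7447

print(f"F1 recomputed from precision and recall: {f1_from_precision_recall(precision, recall):.4f}")
print(f"IoU implied by the reported F1:          {iou_from_f1(reported_f1):.4f}")
```

Both values land within rounding distance of the reported F1 of 0.7447 and IoU of 0.5932, which suggests the overall figures are internally consistent. Per-editor figures that are averaged per image need not satisfy the same identity exactly.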

Response structure and integration

The model returns a JSON response that includes fields such as:

- is_forged: whether any edited regions were detected.

- forgery_probability: confidence that the image contains AI‑edited regions, calibrated for F1.

- num_regions: number of localized edits.

- regions: an array of bounding boxes with normalized coordinates and per‑region confidence scores.

This structure allows downstream systems to automatically highlight edited areas, route suspicious documents for further review or cross‑check specific fields against metadata or text extractions.
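A downstream consumer might handle such a response roughly as follows. This is a hedged sketch: the four top-level field names come from the description above, but the keys inside each region (`x`, `y`, `width`, `height`, `peak_confidence`, `mean_confidence`), the sample values and the routing labels are assumptions made for illustration and should be checked against the actual API documentation.

```python
import json

# Hypothetical payload. Only the four top-level keys are taken from the
# article; the per-region keys and all values are assumed for this sketch.
SAMPLE_RESPONSE = """
{
  "is_forged": true,
  "forgery_probability": 0.91,
  "num_regions": 1,
  "regions": [
    {"x": 0.62, "y": 0.80, "width": 0.10, "height": 0.04,
     "peak_confidence": 0.97, "mean_confidence": 0.83}
  ]
}
"""


def to_pixel_boxes(response, image_width, image_height):
    """Convert normalized region coordinates to (left, top, right, bottom) pixel boxes."""
    boxes = []
    for region in response["regions"]:
        left = round(region["x"] * image_width)
        top = round(region["y"] * image_height)
        right = round((region["x"] + region["width"]) * image_width)
        bottom = round((region["y"] + region["height"]) * image_height)
        boxes.append((left, top, right, bottom))
    return boxes


def route(response):
    """Hypothetical routing rule: any localized edit goes to a human reviewer."""
    if response["is_forged"] and response["num_regions"] > 0:
        return "manual_review"
    return "continue_checks"


response = json.loads(SAMPLE_RESPONSE)
print(route(response))
print(to_pixel_boxes(response, image_width=1200, image_height=1600))
```

The pixel boxes can then be drawn on the image for a reviewer or passed to a field-level cross-check.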

Use Cases: Where Localized Detection Matters

Insurance claims

Fraudsters can easily add dents or hail damage to a photo of a vehicle or house. They might raise the height of floodwater in a basement or erase evidence of prior damage. Adjusters often lack access to the original image for comparison, and manual review cannot catch micro‑edits. The Bynn model pinpoints manipulated regions so that claim adjusters can ask for additional evidence or reject fraudulent claims. When combined with AI‑generated image detection (to catch fully synthetic images) and metadata checks (e.g., verifying camera model, geolocation), insurers can significantly reduce losses.

Expense fraud and accounts payable

A consultant inflates an $80 client dinner to $480 by changing one digit; a contractor raises line-item totals by 15 %; a traveler shifts check‑in dates on a hotel receipt. Because AI edits only modify specific numbers, the rest of the document appears authentic. The localized detection model isolates these altered regions and can be paired with OCR to cross‑check amounts and dates against policies. This approach complements systems like AppZen’s multi‑layered fraud detection, which combines digital fingerprinting, pattern recognition, merchant authentication and mathematical validation to catch fake receipts.
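Pairing localized detection with OCR can be as simple as checking which extracted fields overlap a flagged region. The sketch below is an assumption-laden illustration: the field names, the pixel boxes, the `min_overlap` cutoff of 0.25 and both function names are hypothetical, and a real OCR engine would supply its own box format.

```python
def overlap_fraction(field_box, region_box):
    """Fraction of the OCR field's area covered by a flagged region.

    Boxes are (left, top, right, bottom) in pixels.
    """
    f_left, f_top, f_right, f_bottom = field_box
    r_left, r_top, r_right, r_bottom = region_box
    inter_w = max(0, min(f_right, r_right) - max(f_left, r_left))
    inter_h = max(0, min(f_bottom, r_bottom) - max(f_top, r_top))
    field_area = (f_right - f_left) * (f_bottom - f_top)
    return (inter_w * inter_h) / field_area if field_area else 0.0


def touched_fields(ocr_fields, region_boxes, min_overlap=0.25):
    """Return names of OCR fields that any flagged region covers by at least min_overlap."""
    flagged = []
    for name, field_box in ocr_fields.items():
        if any(overlap_fraction(field_box, box) >= min_overlap for box in region_boxes):
            flagged.append(name)
    return flagged


# Hypothetical OCR output for a receipt and one flagged region near the total.
ocr_fields = {
    "merchant": (100, 100, 600, 160),
    "date": (100, 200, 400, 240),
    "total": (700, 1270, 880, 1340),
}
region_boxes = [(744, 1280, 864, 1344)]

print(touched_fields(ocr_fields, region_boxes))
```

A field flagged this way (here, the total) is the one worth comparing against policy limits, card statements or the merchant's own records.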

KYC and identity verification

Changing a birth date on a passport, swapping a photo on a driver’s licence or altering an address on a utility bill can enable criminals to bypass age restrictions or open fraudulent accounts. The Bynn model highlights the exact fields that were edited, allowing identity verification systems to flag suspicious documents for secondary checks. However, experts emphasize that document-centric verification alone is no longer sufficient; it should be part of a multi-factor identity strategy that includes device integrity, network context and simultaneous validation of multiple signals.

Vendor invoices and accounts payable

Businesses can be defrauded when vendors submit invoices that look genuine but have been slightly altered—e.g., amounts increased or payment terms changed. Localized forgery detection highlights the specific line items that were modified, enabling accounts payable teams to compare them against purchase orders and detect anomalies.

Marketplace and content moderation

Online marketplaces and dating platforms rely on user‑submitted images. Sellers may remove scratches from products or alter brand labels; users may airbrush their appearance. Newsrooms may receive photos where a person or object was quietly removed. The Bynn model can flag edited regions, helping moderation teams maintain trust and comply with platform policies. For news organizations grappling with misinformation, localized detection can uncover where an image has been manipulated and support fact‑checking.

Legal and forensic analysis

In court proceedings, images are often introduced as evidence. Being able to pinpoint edited regions is critical to determining admissibility and establishing whether evidence has been tampered with. The model’s bounding boxes and confidence scores provide an audit trail for forensic experts and attorneys.

Limitations and Best Practices

While Bynn’s model advances the state of the art, it is not a panacea. Known limitations include:

- Compression: aggressive JPEG compression (quality < 60) destroys the high‑frequency residuals the model relies on, reducing accuracy.

- Style transfer and whole‑image edits: edits that affect every pixel (e.g., style transfer) leave a uniform signal and are better caught by whole‑image AI‑generated image detectors. For purely synthetic images, Bynn offers a separate AI‑Generated Image Detection model.

- Editor coverage: the model is trained across nine editors up to January 2026. Novel editors may leave different residual patterns; generalization should be tested.

- Weaknesses on GPT‑4o: performance on GPT‑4o edits is noticeably lower (IoU ≈ 0.38), so additional fraud signals may be needed for those cases.

- Screenshots: documents captured via screenshots can lose compression signatures, reducing localization accuracy.
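The compression limitation above is easier to see with a toy high-pass filter. The sketch below is illustrative only: it uses "pixel minus 3×3 local mean" as the high-pass view and a 3×3 box blur as a crude stand-in for lossy smoothing. Neither is the model's actual filter nor a real JPEG codec, and all function names are assumptions.

```python
def box_mean(image, r, c):
    """Mean of the 3x3 neighbourhood around (r, c), clamping indices at the border."""
    height, width = len(image), len(image[0])
    total = 0.0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            rr = min(max(r + dr, 0), height - 1)
            cc = min(max(c + dc, 0), width - 1)
            total += image[rr][cc]
    return total / 9.0


def blur(image):
    """3x3 box blur, used here as a rough proxy for detail lost to heavy compression."""
    return [[box_mean(image, r, c) for c in range(len(image[0]))] for r in range(len(image))]


def high_pass(image):
    """Residual left after subtracting the local mean from every pixel."""
    return [[image[r][c] - box_mean(image, r, c) for c in range(len(image[0]))]
            for r in range(len(image))]


def energy(residual):
    """Sum of squared residual values."""
    return sum(value * value for row in residual for value in row)


# Flat 5x5 patch with a single sharp perturbation standing in for an editing trace.
patch = [[0.0] * 5 for _ in range(5)]
patch[2][2] = 9.0

before = energy(high_pass(patch))
after = energy(high_pass(blur(patch)))
print(f"residual energy before smoothing: {before:.2f}")
print(f"residual energy after smoothing:  {after:.2f}")
```

The residual energy drops sharply once the patch is smoothed, which is the intuition behind the limitation: whatever removes fine detail from an image also removes much of the signal a noise branch depends on.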

Furthermore, probability scores are not legal proof; decisions with high stakes should always involve human review. False positives can occur (heavy filtering or unusual textures may produce spurious regions), so there should be an appeal path. For the most robust workflows, organizations should combine multiple signals: AI‑generated image detection to catch fully synthetic images, document tampering detection, OCR field checks, metadata analysis and device/network signals. In identity verification, a multi‑factor approach that simultaneously validates device trust, location context and document forensics dramatically increases the cost of fraud.

Conclusion: Building Trust in the Age of Generative Editing

Generative AI has democratized image editing, collapsing the barrier between amateur and professional forgers. A prompt typed into a model like Qwen‑Image‑Edit or GPT‑4o can quietly alter a receipt, a claim photo, or an ID in seconds, fueling insurance fraud, expense abuse, identity theft and political misinformation. Surveys show that consumers are increasingly comfortable manipulating images, and insurers and auditors are struggling to keep up. Traditional verification methods fail because they either rely on manual inspection or classify an entire image without localizing edits. In this environment, detection must evolve.

Bynn’s AI Edited Image Forgery Detection model addresses this challenge by providing pixel‑level forensics. Its triple‑stream encoder, contrastive training and editor‑agnostic design allow it to highlight the exact regions that have been repainted, enabling investigators to focus on the right details. When used alongside AI‑generated image detectors, digital fingerprinting, pattern recognition, merchant verification and multi‑factor identity checks, the model can close the detection gap and restore trust in digital images. In other words, the fight against AI‑driven fraud requires AI‑powered defense.

As generative models continue to improve, organizations should expect an arms race. Fraudsters will iterate on prompts and editors, while detection systems will adapt with new forensic techniques. Regulation and standards will be needed to ensure transparency (e.g., mandatory “AI generated” watermarks) and accountability for misuse. Ultimately, building trust in digital media will depend on combining technology, policy and human judgment to ensure that images still serve as reliable evidence in an age where pixels can lie.
